Small changes in how a question is asked can produce completely different AI answers.

Image credit: KorishTech (AI-generated)

AI prompt quality matters because the question does not just request an answer. It shapes the task.

Most people assume a simple failure pattern.

If an AI answer is weak, the system must be the problem.

The model is not good enough.

The tool is unreliable.

The output cannot be trusted.

But when you look closely at how these systems behave, a different pattern appears.

The same model can produce:

- a vague answer

- a precise answer

- a completely different answer

— all from the same topic.

The system did not change.

The question did.

And that is where the failure usually begins.

The Answer Starts Before the AI Responds

AI systems do not generate answers in isolation.

They generate responses conditioned on the input.

Every word (more precisely, every token) is produced step by step, based on what the model has already seen, both during training and within the prompt itself. This process, known as next-token prediction, means the system is not retrieving a stored answer.

It is constructing one.

At each step, the system is not asking:

“What is the correct answer?”

It is asking:

“What is the most likely continuation of this text, given everything so far?”

That “everything so far” includes your question.

So the answer does not exist as a fixed object waiting to be retrieved.

It is built in real time from the structure of the input.

Change the input, and you change the path the system takes.
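The idea can be sketched with a toy model. This is only an illustration under loud assumptions: the two-sentence corpus, the function names, and the two-token context window are all hypothetical, and a real language model is vastly larger and works on learned probabilities rather than raw counts. What it shows is that the continuation is built token by token from the text so far, so changing one word of the prompt changes the whole path.

```python
from collections import Counter, defaultdict

# Hypothetical two-sentence "training corpus"; real models learn from far more text.
TRAINING_TEXT = [
    "briefly ai is software that learns",
    "technically ai denotes models trained on data",
]


def train(sentences, order=2):
    """Count which token follows each run of `order` consecutive tokens."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split()
        for i in range(len(tokens) - order):
            context = tuple(tokens[i:i + order])
            counts[context][tokens[i + order]] += 1
    return counts


def continue_text(counts, prompt, order=2, max_new_tokens=10):
    """Greedily append the most likely next token until no continuation is known."""
    tokens = prompt.split()
    for _step in range(max_new_tokens):
        context = tuple(tokens[-order:])
        if context not in counts:
            break  # the toy model has never seen this context
        next_token, _count = counts[context].most_common(1)[0]
        tokens.append(next_token)
    return " ".join(tokens)


MODEL = train(TRAINING_TEXT)
print(continue_text(MODEL, "briefly ai"))
print(continue_text(MODEL, "technically ai"))
```

Both prompts are about the same topic, yet each one sends the toy model down a different path because the first word changes what counts as the most likely continuation.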

This connects directly to the idea explored in “Why Small Changes in Questions Change AI Answers”, where even minor variations in phrasing can shift the output entirely.

A Prompt Is Not a Question — It Is a Task Definition

When people interact with AI, they think they are asking questions.

In practice, they are defining a task.

A prompt silently encodes:

- what the system should do

- what assumptions it should make

- what level of detail is required

- how the output should be structured

If these elements are not made explicit, the system has to infer them.

And inference, in this context, is a matter of probability.

That means the model is not recovering your intent.

It is selecting the most likely interpretation of it.

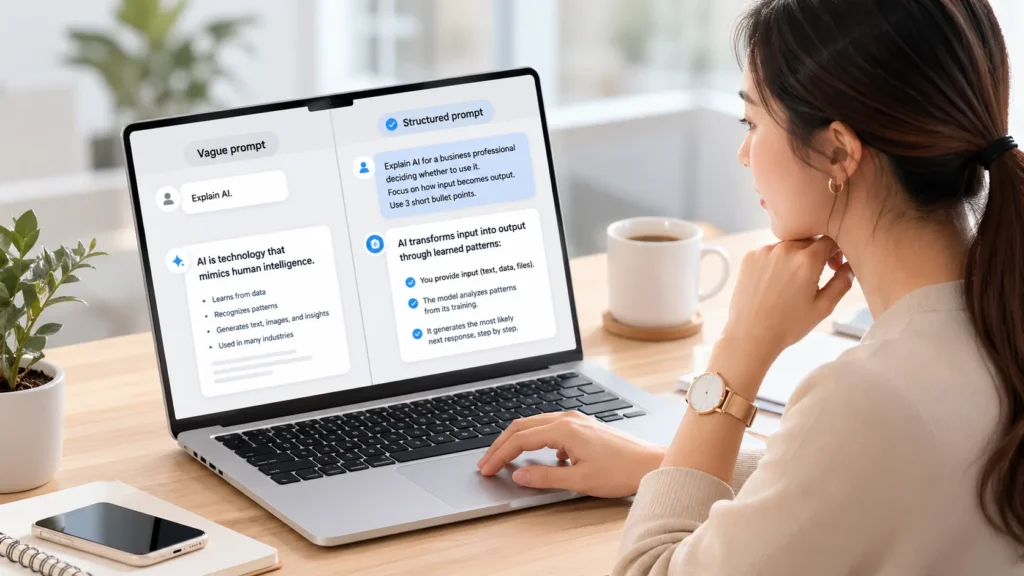

Two prompts that feel identical to a human can produce very different outputs.

Because to the system, they define different tasks.

This is where AI prompt quality actually comes from.

When the task, audience, scope, and format are made explicit, the system no longer has to guess what matters.

It simply follows the structure you have already defined.
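One way to picture a prompt as a task definition is to write the four elements down as fields. The sketch below is hypothetical: `TaskSpec`, its field names, and the `Audience:` / `Scope:` / `Format:` labels are illustrative choices, not a standard or any tool's API. It simply makes visible which elements are explicit and which are left for the system to infer.

```python
from dataclasses import dataclass


@dataclass
class TaskSpec:
    """Hypothetical container for the elements a prompt encodes."""
    task: str
    audience: str = ""
    scope: str = ""
    output_format: str = ""

    def unspecified(self):
        """Return the elements the system would have to infer."""
        optional = ("audience", "scope", "output_format")
        return [name for name in optional if not getattr(self, name)]

    def to_prompt(self):
        """Join the task and every explicit element into one prompt string."""
        parts = [self.task]
        if self.audience:
            parts.append(f"Audience: {self.audience}.")
        if self.scope:
            parts.append(f"Scope: {self.scope}.")
        if self.output_format:
            parts.append(f"Format: {self.output_format}.")
        return " ".join(parts)


vague = TaskSpec(task="Explain AI.")
explicit = TaskSpec(
    task="Explain AI.",
    audience="a non-technical business manager",
    scope="how it turns input into output",
    output_format="5 short paragraphs",
)

print(vague.unspecified())     # elements left to guesswork
print(explicit.unspecified())  # nothing left to guess
print(explicit.to_prompt())
```

The vague version leaves three elements open, and each of them is something the system has to guess.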

Why Weak Questions Produce Weak Answers

When a question is vague, the system is forced into a wide solution space.

There are too many possible interpretations.

Should the answer be technical or simple?

Detailed or brief?

General or domain-specific?

Without guidance, the model resolves this uncertainty by choosing what is most broadly applicable.

That usually results in:

- safe phrasing

- generic structure

- widely acceptable explanations

The answer may sound correct.

But it is rarely useful.

A well-framed prompt does not make the system smarter.

It reduces the need for the system to guess.
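The size of that solution space can be made concrete with a rough count. The sketch below assumes, purely for illustration, that the three open questions above are simple either/or choices; the dictionary keys and the function name are hypothetical, and real prompts leave far more than three things open. Under that simplification, a prompt that settles nothing has eight possible readings, and each explicit constraint halves the number.

```python
from itertools import product

# The three open questions from the text, treated as binary choices (a simplification).
OPEN_CHOICES = {
    "register": ["technical", "simple"],
    "length": ["detailed", "brief"],
    "domain": ["general", "domain-specific"],
}


def interpretations(constraints):
    """List every reading of a prompt that is consistent with `constraints`."""
    options = [
        [constraints[name]] if name in constraints else values
        for name, values in OPEN_CHOICES.items()
    ]
    return list(product(*options))


print(len(interpretations({})))                      # 8
print(len(interpretations({"register": "simple"})))  # 4
print(len(interpretations({
    "register": "simple",
    "length": "brief",
    "domain": "general",
})))                                                 # 1
```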

One Topic, Four Prompts, Four Different Answers

This becomes clear when tested directly.

Using the same system, the same topic was asked in four different ways.

“Explain AI.”

The response was broad and general — definitions, examples, and categories.

“Explain AI for a non-technical business manager who needs to decide whether to use it at work.”

The response shifted toward decision-making — use cases, risks, and practical application.

“Explain AI in 5 short paragraphs. Focus only on how it turns input into output. Do not discuss future risks.”

The output became constrained and technical, focusing only on the mechanism.

“I am writing a blog article for general readers. Explain why AI answers depend on how the question is asked. Use one practical example and avoid technical jargon.”

The response became simplified, structured, and aligned with the intended audience.

The topic did not change.

The model did not change.

Only the prompt changed.

And that was enough to produce four different answers.

A prompt is not just a question. It is a task design.

Why the System Does Not Correct You

It is natural to expect an intelligent system to recognise a poorly formed question and fix it.

But most AI systems are not designed to do that.

They are designed to respond.

Evaluating whether a question is incomplete or ambiguous requires an additional layer of reasoning — one that is not always active.

So when a prompt is unclear, the system does not stop and ask:

“Do you mean this?”

It proceeds with the most probable interpretation and generates an answer from there.

That answer may sound confident.

But confidence reflects fluency — not correctness.

Better Questions Improve Output — But They Do Not Guarantee It

Improving a question usually improves the answer.

But even when the same prompt is reused, the output is not guaranteed to be identical.

This is not a flaw in how the prompt is written.

It is a property of how the system works.

AI models generate outputs by sampling from probability distributions. Even with identical inputs, small variations in generation can lead to different outputs in wording, structure, or emphasis.
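A small sketch of sampling shows why identical inputs can diverge. The probabilities below are invented for illustration, and the function names are hypothetical; the only real API used is Python's standard `random.Random.choices`. The distribution never changes between draws, yet the sampled token does. Always picking the single most likely token, shown for contrast, would give the same result every time.

```python
import random
from collections import Counter

# Hypothetical next-token probabilities for one fixed prompt.
NEXT_TOKEN_PROBS = {"tool": 0.5, "model": 0.3, "system": 0.2}


def sample_token(probs, rng):
    """Draw one token at random, weighted by its probability."""
    tokens = list(probs)
    weights = list(probs.values())
    return rng.choices(tokens, weights=weights, k=1)[0]


def greedy_token(probs):
    """Always pick the single most likely token (no randomness)."""
    return max(probs, key=probs.get)


rng = random.Random(0)  # seeded only so the sketch is repeatable
samples = [sample_token(NEXT_TOKEN_PROBS, rng) for _ in range(1000)]

print(Counter(samples))                # several different tokens appear
print(greedy_token(NEXT_TOKEN_PROBS))  # always the same token
```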

On top of that, the system itself is not static.

Most AI tools apply additional layers that are not visible to the user:

- system-level instructions

- safety filters

- context shaping

These layers can change between sessions or be updated over time.
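As a rough sketch of what those hidden layers mean in practice, the text the model actually conditions on can be pictured as a list of messages, with the user's prompt as only the last entry. Everything here is an assumption made for illustration: the function name, the message layout, and the `context` role are hypothetical and do not describe any particular product.

```python
def build_model_input(user_prompt, system_instructions="", context=()):
    """Assemble what the model conditions on: hidden layers first, then the user's prompt."""
    messages = []
    if system_instructions:
        messages.append({"role": "system", "content": system_instructions})
    # Hypothetical "context" role standing in for any extra shaping a tool applies.
    messages.extend({"role": "context", "content": item} for item in context)
    messages.append({"role": "user", "content": user_prompt})
    return messages


plain = build_model_input("Explain AI.")
shaped = build_model_input(
    "Explain AI.",
    system_instructions="Answer in one sentence.",
)

print(plain)
print(shaped)
```

The user typed the same prompt in both cases, but the full input differs, so the output can differ too.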

Even structured templates — designed to standardise output — cannot fully remove this effect.

They reduce variability.

They do not eliminate it.

In practice, AI prompt quality improves control over the output, but it does not remove uncertainty from the system.

This is also why improved prompts do not remove the need for judgement. As explained in “Why AI Gives Confident Answers Even When It Is Wrong”, fluent responses can still appear reliable even when they are not.

My Take

There is a growing belief that better results come from giving AI a clear persona.

“Act as a business analyst.”

“Respond like a teacher.”

“Write like a consultant.”

This works.

But not for the reason most people assume.

A persona does not give the system identity.

It gives the system constraints.

It narrows how the output should be generated — tone, structure, perspective. In that sense, it is a form of input framing, not memory.

And that distinction matters.

Because even with a well-defined persona, the system does not maintain continuity in the way a human would.

Each interaction is generated from the prompt and the immediate context.

If that context shifts, the behaviour shifts with it.

This is why modern AI systems are evolving beyond simple prompts.

Instead of relying on a single input, they use layered structures:

- persistent system instructions

- memory modules

- structured workflows

- validation layers

These are not improvements to the model alone.

They are attempts to stabilise behaviour around it.
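A validation layer, the last item in that list, can be sketched as a wrapper that checks each output and asks again when the check fails. This is a minimal sketch under stated assumptions: `generate_validated`, `fake_generate`, and `is_short` are hypothetical names, and the canned outputs stand in for a real model call so that the example runs on its own.

```python
def generate_validated(generate, prompt, is_valid, max_attempts=3):
    """Call `generate` until `is_valid` accepts the output or attempts run out."""
    for attempt in range(1, max_attempts + 1):
        output = generate(prompt)
        if is_valid(output):
            return output, attempt
    raise ValueError(f"no valid output after {max_attempts} attempts")


# Stand-in for a model: returns canned outputs in order (hypothetical).
canned_outputs = iter([
    "A long rambling answer that ignores the requested limit entirely",
    "Short answer",
])


def fake_generate(prompt):
    return next(canned_outputs)


def is_short(text):
    """Example check: accept outputs of at most three words."""
    return len(text.split()) <= 3


result, attempts = generate_validated(fake_generate, "Explain AI briefly.", is_short)
print(result, attempts)
```

The wrapper does not make any single generation more reliable. It only filters what reaches the user, which is why it stabilises behaviour around the model rather than inside it.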

The direction is clear.

AI is moving from isolated responses toward controlled systems.

But at the core, the behaviour remains the same.

It is generated.

It is conditional.

And it still depends on how the task is defined at the beginning.

Sources

- MIT Sloan Management Review: Generative AI results depend on user prompts as much as models
  https://mitsloan.mit.edu/ideas-made-to-matter/study-generative-ai-results-depend-user-prompts-much-models

- IBM: What are large language models
  https://www.ibm.com/think/topics/large-language-models

- AWS: What is prompt engineering
  https://aws.amazon.com/what-is/prompt-engineering/

- Nielsen Norman Group: Prompt structure and AI response behaviour
  https://www.nngroup.com/articles/ai-prompt-structure/

- Harvard University Information Technology: AI prompts and usage
  https://www.huit.harvard.edu/news/ai-prompts

- Nature (LLM research and generation behaviour)
  https://www.nature.com/articles/s41586-025-10041-x

- ACL / arXiv research papers on prompt sensitivity, ambiguity, and generation behaviour (multiple studies referenced in research set)