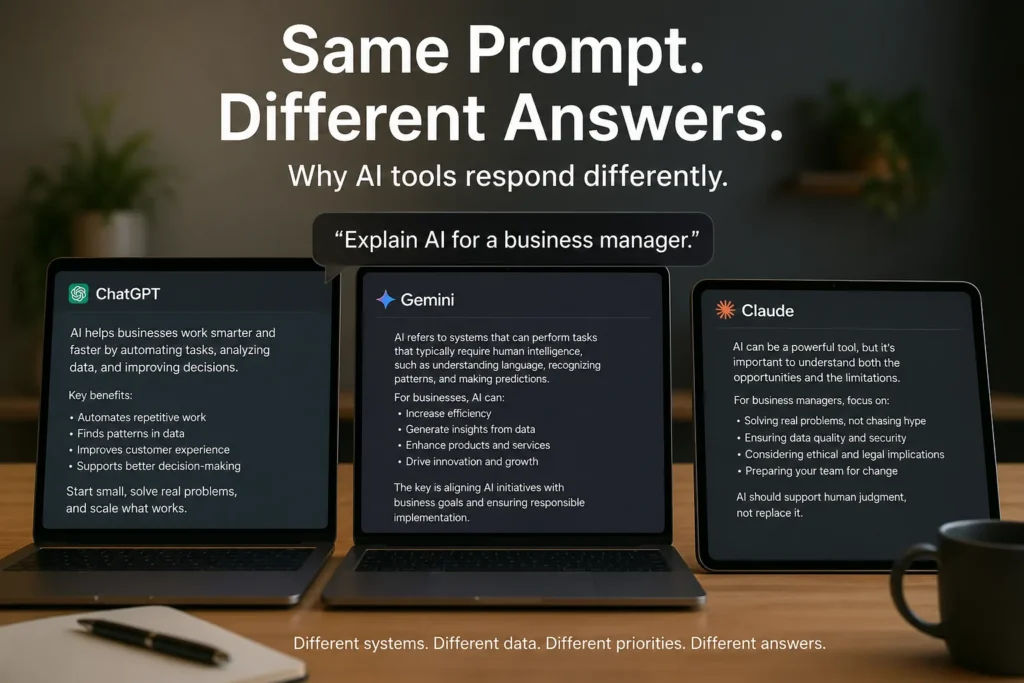

The same prompt can produce different answers because each AI system is shaped by different data, alignment, and design choices. Image credit: KorishTech (AI-generated)

If AI systems were consistent, the same prompt would always produce the same answer.

In practice, it doesn’t, and the reason is not just randomness. A large part of it is that you are not interacting with the same system.

The same prompt, entered into ChatGPT, Google Gemini, or Anthropic’s Claude, can produce noticeably different answers: not just in wording, but in structure, emphasis, and even conclusions.

This is why different AI systems give different answers even when the prompt stays the same.

Same Prompt, Different System

A prompt feels like a fixed instruction.

But in AI systems, it is only the starting condition.

When you enter a prompt, the model does not retrieve a stored answer. It generates one, token by token, based on learned patterns. That generation process depends on the system behind it as much as on the prompt itself.

The prompt is the same, but each AI system starts from a different probability landscape, so the answer path can diverge from the very first token.

You are not asking one AI the same question.

You are sending the same sentence into different systems.

This connects directly to Why Small Changes in Questions Change AI Answers, where the issue is prompt sensitivity: small wording changes can shift the output. In this article, the wording stays the same, but the system receiving it changes.

The Answer Depends on the Model Behind the Interface

Every AI model generates text from a probability distribution.

At each step, the system estimates a probability for every possible next token, given everything that came before, and then picks one. There is no single “correct sentence” stored inside the model, only a range of plausible continuations.
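
A minimal sketch of one generation step may help. The candidate words and their raw scores (logits) below are invented for illustration; a real model scores every token in its vocabulary, not four. The softmax function turns scores into probabilities, and the system then either takes the most likely token (greedy decoding) or samples one in proportion to its probability.

```python
import math
import random


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    top = max(logits.values())  # subtract the max score for numerical stability
    exps = {tok: math.exp(score - top) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def next_token(logits, greedy=True, rng=None):
    """Pick the most likely token, or draw one at random weighted by probability."""
    probs = softmax(logits)
    if greedy:
        return max(probs, key=probs.get)
    rng = rng or random.Random()
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]


# Hypothetical scores for the word after "AI helps managers ..."
logits = {"decide": 2.0, "automate": 1.5, "forecast": 1.0, "worry": -1.0}

for tok, p in sorted(softmax(logits).items(), key=lambda kv: -kv[1]):
    print(f"{tok:<10} {p:.2f}")
print("greedy pick:", next_token(logits))  # greedy pick: decide
```

Every word in the table is a plausible continuation with some probability; nothing in the sketch looks up a single stored answer.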

Different models assign different probabilities to those continuations.

That means the same prompt does not produce the same path. It produces different paths through different probability landscapes.
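
Different paths through different probability landscapes can be sketched with two toy “models” that are nothing more than hypothetical next-word probability tables. Real models condition on the entire context rather than only the previous word, so this illustrates the principle, not any real system.

```python
# Two hypothetical "models", each reduced to invented next-word probabilities.
MODEL_A = {
    "<start>": {"AI": 0.6, "It": 0.4},
    "AI": {"automates": 0.7, "carries": 0.3},
    "automates": {"routine": 0.8, "risky": 0.2},
    "routine": {"work.": 1.0},
}
MODEL_B = {
    "<start>": {"AI": 0.55, "It": 0.45},
    "AI": {"carries": 0.6, "automates": 0.4},
    "carries": {"real": 0.9, "some": 0.1},
    "real": {"risks.": 1.0},
}


def greedy_generate(model, max_words=10):
    """Follow the single most likely next word until no continuation exists."""
    words, current = [], "<start>"
    for _ in range(max_words):
        options = model.get(current)
        if not options:
            break
        current = max(options, key=options.get)
        words.append(current)
    return " ".join(words)


print("Model A:", greedy_generate(MODEL_A))  # Model A: AI automates routine work.
print("Model B:", greedy_generate(MODEL_B))  # Model B: AI carries real risks.
```

Both toy models start with the same word and then split at the second step, because each ranks the same options differently.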

Comparative studies using large prompt sets and multiple model families consistently show this pattern: outputs from the same model tend to resemble each other more closely than outputs across different models, even when the prompt is identical. Large-scale evaluations using thousands of prompts also show systematic differences between models in style, bias, and how they express uncertainty.
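
The shape of such a comparison can be sketched as follows. This version uses simple word overlap (Jaccard similarity) on a handful of invented responses; published evaluations use thousands of prompts and richer similarity measures, so the numbers here say nothing about any real model.

```python
from itertools import combinations
from statistics import mean


def jaccard(a, b):
    """Fraction of distinct words two texts share (0 = none, 1 = identical word sets)."""
    wa, wb = set(a.lower().split()), set(b.lower().split())
    union = wa | wb
    return len(wa & wb) / len(union) if union else 1.0


def within_vs_across(outputs):
    """outputs maps each model name to several responses to the same prompt."""
    within = [jaccard(x, y)
              for texts in outputs.values()
              for x, y in combinations(texts, 2)]
    across = [jaccard(x, y)
              for m1, m2 in combinations(outputs, 2)
              for x in outputs[m1]
              for y in outputs[m2]]
    return mean(within), mean(across)


# Invented responses, two per hypothetical model, all to one prompt.
toy_outputs = {
    "model_a": ["AI automates routine work for managers",
                "AI automates routine tasks for managers"],
    "model_b": ["AI carries real risks for managers",
                "AI carries serious risks for managers"],
}

w, a = within_vs_across(toy_outputs)
print(f"within-model {w:.2f}, across-model {a:.2f}")  # within-model 0.71, across-model 0.33
```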

The variation is structural.

These differences are not random noise. They reflect system-level differences in how each model assigns probabilities.

Training Data Changes What the Model Treats as Normal

Models learn patterns from data.

What a model has seen — and how that data was selected — determines what it considers relevant, authoritative, or typical.

If one model is trained on more technical material, it may produce more structured and formal responses. Another, trained on broader web content, may generate more conversational answers.

Differences in domain coverage, data recency, and filtering all shape what the model treats as a “good” answer.

So when the same prompt is given, each system starts from a different internal baseline.

Alignment Changes What the Model Is Willing to Say

After training, models are adjusted to behave in certain ways.

This includes training them to be helpful, to avoid harmful content, and to follow safety policies.

These adjustments change how the model selects among possible outputs.

One system may give a direct answer. Another may add caution, include disclaimers, or refuse to answer certain interpretations.

These differences are not accidental.

They are built into the system — and they directly influence what the model considers an acceptable response.
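
One crude way to picture this is as a shift in the scores a model assigns before it chooses. In reality, alignment techniques such as fine-tuning on human feedback change the model’s weights rather than adding a fixed bonus at the end, and the options and numbers below are invented; the sketch only shows how a change in preferences can change which response wins.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    top = max(logits.values())
    exps = {t: math.exp(s - top) for t, s in logits.items()}
    total = sum(exps.values())
    return {t: e / total for t, e in exps.items()}


def shift(logits, preference):
    """Add a per-option bonus (hypothetical stand-in for alignment)."""
    return {t: s + preference.get(t, 0.0) for t, s in logits.items()}


# Invented scores for how a reply might open.
base = {"Sure,": 1.2, "Note:": 0.8, "I can't": -0.5}

# Invented preference of a more cautious system: favour hedged or refusing openings.
cautious = {"Note:": 0.9, "I can't": 0.4}

for name, scores in [("direct", base), ("cautious", shift(base, cautious))]:
    probs = softmax(scores)
    top = max(probs, key=probs.get)
    print(f"{name}: opens with {top!r} (p={probs[top]:.2f})")
```

The underlying options are identical in both runs; only the preference applied to them differs, and that is enough to change the opening the system picks.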

Hidden System Instructions Change the Final Answer

What you type is not the only input.

AI systems often add hidden inputs and processing steps, such as system prompts, safety instructions, and tool-routing logic, before your request reaches the model.

This means two platforms can receive the same visible prompt but interpret it under different internal conditions.
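
The effect of a hidden system prompt can be sketched with the role-tagged message list that many chat APIs use. The structure is simplified and both system prompts are invented; they are not the instructions any real platform uses.

```python
# Invented system prompts for two hypothetical platforms.
PLATFORM_A_SYSTEM = "You are a concise assistant. Prefer bullet points."
PLATFORM_B_SYSTEM = "You are a careful assistant. Flag risks and uncertainty."


def build_input(system_prompt, user_prompt):
    """Combine hidden instructions with the visible prompt into one model input."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]


user_prompt = "Explain AI for a business manager."
input_a = build_input(PLATFORM_A_SYSTEM, user_prompt)
input_b = build_input(PLATFORM_B_SYSTEM, user_prompt)

print(input_a[-1] == input_b[-1])  # True: the visible prompt is identical
print(input_a == input_b)          # False: the full input the model sees is not
```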

In addition, some systems:

- integrate web search

- rewrite or structure your input

- apply moderation before and after generation

The answer you see is not just generated — it is filtered, shaped, and sometimes rewritten by the system before it reaches you.
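
The list above can be sketched as a chain of stages wrapped around the model call. Every function here is a hypothetical placeholder (the search, rewriting, and moderation steps do nothing realistic); the point is only that two platforms can send the same prompt to the same model through different stages and return different text.

```python
# Every stage is a hypothetical placeholder, not any platform's real pipeline.
BLOCKED_PHRASES = {"password dump"}


def pre_moderate(prompt):
    """Return None to block the request, otherwise pass it through unchanged."""
    if any(phrase in prompt.lower() for phrase in BLOCKED_PHRASES):
        return None
    return prompt


def add_search_context(prompt):
    """Stand-in for web search: attach retrieved context to the request."""
    return prompt + " [context: top search results]"


def restructure(prompt):
    """Stand-in for input rewriting: add formatting guidance."""
    return prompt + " Answer in short sections."


def generate(prompt):
    """Stand-in for the model call itself (identical for both platforms)."""
    return f"[answer to: {prompt}]"


def post_moderate(answer):
    """Stand-in for an output filter that appends a notice."""
    return answer + " (Verify important details.)"


def run_pipeline(prompt, before, after):
    """Apply input stages, call the model, then apply output stages."""
    for stage in before:
        prompt = stage(prompt)
        if prompt is None:
            return "Sorry, I can't help with that."
    answer = generate(prompt)
    for stage in after:
        answer = stage(answer)
    return answer


prompt = "Explain AI for a business manager."
print(run_pipeline(prompt, [], []))
print(run_pipeline(prompt, [pre_moderate, add_search_context, restructure], [post_moderate]))
```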

One Prompt, Three Possible Answers

Take a simple prompt:

“Explain AI for a business manager.”

One system might generate:

- a structured explanation focused on practical use cases

Another might:

- produce a high-level conceptual overview

Another might:

- emphasise risks, limitations, and uncertainty

This also builds on Why Asking the Right Question Matters More Than Knowing the Answer. A better question improves the output, but this article shows the next layer: even a good prompt can behave differently across different AI systems.

These differences are not just stylistic. They reflect different probability priorities — one system may optimise for clarity, another for completeness, and another for safety.

All of these answers are plausible.

None are inherently wrong.

They represent different paths from the same prompt under different system conditions.
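
As a loose analogy only (models do not literally score finished answers like this), the different priorities can be pictured as weights. The three candidate answers mirror the example above; every score and weight is invented.

```python
# Invented quality scores (0 to 1) for the three example answers.
CANDIDATES = {
    "use-case walkthrough": {"clarity": 0.9, "completeness": 0.5, "safety": 0.5},
    "conceptual overview": {"clarity": 0.6, "completeness": 0.9, "safety": 0.5},
    "risk-focused briefing": {"clarity": 0.5, "completeness": 0.6, "safety": 0.9},
}

# Invented priority weights for three hypothetical systems.
SYSTEMS = {
    "clarity-first": {"clarity": 0.6, "completeness": 0.2, "safety": 0.2},
    "completeness-first": {"clarity": 0.2, "completeness": 0.6, "safety": 0.2},
    "safety-first": {"clarity": 0.2, "completeness": 0.2, "safety": 0.6},
}


def preferred(weights):
    """Return the candidate with the highest weighted score."""
    def score(name):
        return sum(weights[q] * CANDIDATES[name][q] for q in weights)
    return max(CANDIDATES, key=score)


for system, weights in SYSTEMS.items():
    print(f"{system}: {preferred(weights)}")
```

Each hypothetical system picks a different answer from the same three candidates, and none of the picks is wrong under its own weights.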

Why Different AI Answers Matter for Trust

This behaviour breaks a key assumption: that AI tools are consistent and interchangeable.

They are not.

When different systems produce different answers, the instinct is to ask which one is correct. But in many cases — especially for explanations, summaries, or advice — multiple answers can be valid within different system constraints.

This creates a practical problem.

When two systems give different answers, both can appear fluent and confident, even if they prioritise different aspects of the problem. Users are left comparing outputs without a clear signal of which one better fits their need.

This is why reliability in AI is not just about correctness. It is about understanding how and why a system produces a particular type of answer.

Even when prompts are identical, different answers across systems are expected, because each system prioritises different aspects of the same task.

Consistency Can Improve — But It Cannot Be Assumed

AI systems can be made more consistent.

Reducing randomness, adding constraints, and structuring prompts can narrow variation. But consistency is still limited by differences in training, alignment, hidden system instructions, and infrastructure-level behaviour, such as how requests are batched and computed on shared hardware.

Even repeated runs of the same model can produce variation.
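
Much run-to-run variation comes from sampling, and one common lever for reducing it is the sampling temperature. The sketch below, with invented scores, divides the scores by a temperature before converting them to probabilities: at 1.0 the draws spread across all three words, while at 0.1 almost every draw is the top word. The fixed seed makes this local demo repeatable; it does not imply that a hosted AI service will return identical outputs.

```python
import math
import random
from collections import Counter


def sample(logits, temperature, rng):
    """Divide scores by temperature (must be > 0), convert to weights, draw one token."""
    scaled = {t: s / temperature for t, s in logits.items()}
    top = max(scaled.values())
    weights = {t: math.exp(s - top) for t, s in scaled.items()}
    tokens = list(weights)
    return rng.choices(tokens, weights=[weights[t] for t in tokens], k=1)[0]


# Invented scores for three possible next words.
logits = {"decide": 2.0, "automate": 1.5, "forecast": 1.0}

results = {}
for temperature in (1.0, 0.1):
    rng = random.Random(42)  # fixed seed so this demo repeats exactly
    results[temperature] = Counter(sample(logits, temperature, rng) for _ in range(1000))
    print(temperature, dict(results[temperature]))
```

Lowering the temperature narrows variation without removing it, which matches the point above: consistency can be pushed up, but it is a setting, not a guarantee.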

Across different systems, variation is unavoidable.

Consistency is not a default property.

It is a design outcome — and it is always partial.

Structured prompts can reduce some of this variation. This is the focus of What Is Prompt Engineering (And Why It Matters): prompt engineering improves control, but it cannot make different AI systems behave exactly the same.

My Take

Most people treat AI tools as if they were the same system with different interfaces.

They are not.

They are different systems, each generating answers from its own learned probabilities, shaped by its own data, alignment decisions, and system design.

The same prompt producing different answers is not a failure of AI. It is evidence that each system is making different decisions about what matters — relevance, safety, clarity, or completeness.

Understanding why different AI systems give different answers is essential for using them effectively.

Because once you see it, the question changes.

Not:

“Why are these answers different?”

But:

“Which system is most likely to produce the kind of answer I actually need?”

Sources

- MIT Sloan School of Management: AI results depend on prompts and system design
  https://mitsloan.mit.edu/ideas-made-to-matter/study-generative-ai-results-depend-user-prompts-much-models

- IBM: Large language models and probabilistic text generation
  https://www.ibm.com/think/topics/large-language-models

- Amazon Web Services: Prompt engineering and model behaviour
  https://aws.amazon.com/what-is/prompt-engineering/

- Nielsen Norman Group: Prompt structure and AI response variability
  https://www.nngroup.com/articles/ai-prompt-structure/

- Association for Computational Linguistics: Research on LLM variability and evaluation
  https://aclanthology.org/2025.acl-long.1544.pdf

- arXiv: Studies on probabilistic generation and model behaviour
  https://arxiv.org/html/2402.07632v4