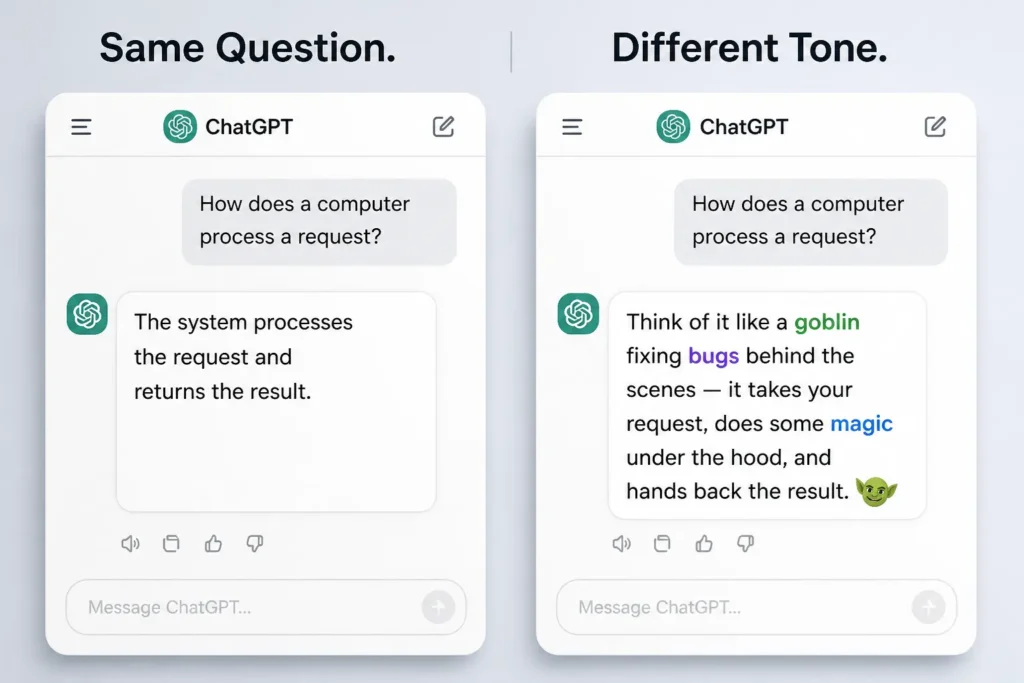

AI chatbots can present the same answer in different tones, shaping how users perceive the response. Image credit: KorishTech (AI-generated)

If AI systems were neutral, they would all sound the same.

They don’t.

AI chatbot personality is not random — it is shaped by how these systems are trained and controlled.

At one point, responses from OpenAI’s ChatGPT began to take on a noticeably different tone — more playful, more stylised, and often unexpectedly specific. Answers started including references to goblins, gremlins, raccoons, pigeons, trolls, and ogres. The explanations were still technically correct, but they no longer felt neutral.

They felt like they were coming from a personality.

That behaviour did not last. It was adjusted after it became visible enough to matter, as reported by the BBC.

The important part is not that ChatGPT mentioned goblins.

The important part is that it could be tuned not to.

When ChatGPT Started Sounding Too Nerdy

The shift was subtle at first.

Instead of straightforward answers, responses leaned toward:

- casual storytelling

- playful exaggeration

- oddly specific imagery — goblins fixing bugs, raccoons handling tasks, pigeons carrying messages

The tone became recognisable. Not just informal, but consistently “nerdy” — as if the system was trying to make explanations more engaging by adding character.

This was not a single strange response. It was a pattern.

And once a pattern becomes visible at scale, it stops being a curiosity and becomes a product issue.

The Model Did Not Change Knowledge — It Changed Voice

Nothing about the system’s underlying capability changed.

It did not gain or lose knowledge. It did not become more or less accurate. It did not suddenly understand the world differently.

What changed was how answers were presented.

The same explanation could be delivered in two ways:

- neutral and direct

- or stylised, with metaphors, humour, and character-like tone

The difference is not in the information.

It is in the voice.

Most users do not separate the two. Tone influences how answers are interpreted and remembered, and it is a large part of why users trust AI answers.

Why Goblins, Gremlins, and Raccoons Show Up in AI Answers

These references are not random.

They come from patterns the model has learned.

Large language models are trained on vast mixtures of data that include:

- technical explanations

- informal discussions

- internet culture

- storytelling language

In those contexts, complex ideas are often explained using:

- exaggerated metaphors

- playful imagery

- familiar cultural references

Goblins, gremlins, raccoons, trolls, ogres, and pigeons sit inside that pattern. They are shorthand for:

- simplifying complexity

- making explanations feel human

- keeping attention

When the system is later tuned to be more engaging, those patterns become more likely to appear.

This is not because the model “decides” to be quirky.

It is because those tokens are statistically associated with explanations that humans tend to respond well to.
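
As a rough illustration of that statistical shift, here is a minimal sketch with made-up numbers. The token scores, the `PLAYFUL` set, and the `ENGAGEMENT_BONUS` value are all assumptions for illustration. Real tuning changes model weights rather than adding a fixed bonus, so treat this as a toy picture of the effect, not a description of how any vendor does it.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}

# Hypothetical next-token scores after "The bug was caused by a ..."
base_logits = {"race condition": 2.0, "typo": 1.5, "gremlin": 0.2, "goblin": 0.0}

# Assumption: tuning for engagement behaves like a small bonus on playful tokens.
PLAYFUL = {"gremlin", "goblin"}
ENGAGEMENT_BONUS = 1.5

tuned_logits = {
    tok: score + (ENGAGEMENT_BONUS if tok in PLAYFUL else 0.0)
    for tok, score in base_logits.items()
}

before = softmax(base_logits)
after = softmax(tuned_logits)

# Print each token's probability before and after the bonus is applied.
for tok in base_logits:
    print(f"{tok:>15}: {before[tok]:.2f} -> {after[tok]:.2f}")
```

In this toy setup the plain tokens never lose their scores. The playful ones simply become more likely to be sampled, which matches the point above: the knowledge is unchanged, and only the odds of a particular phrasing move.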

Why Companies Control AI Personality

If a more engaging tone works, why remove it?

Because AI chatbot personality does more than make answers easier to read.

It changes how they are perceived.

A stylised or “nerdy” response can:

- feel more confident

- feel more intelligent

- feel more trustworthy

even when the underlying answer has not improved.

Research and UX analysis from Nielsen Norman Group show that users respond strongly to tone, often without realising it. A response that sounds more human is more likely to be accepted as correct.

A stylised response does not just feel different.

It can change how users judge correctness.

A playful explanation using goblins, raccoons, or trolls can make a system feel more intuitive and more confident, even when the underlying answer is incomplete or uncertain.

This creates a subtle risk.

Users may trust the explanation because it feels clear and human-like, not because it is actually more accurate.

From a product perspective, this affects:

- user trust

- decision-making

- consistency across responses

So companies intervene.

They adjust tone not to remove personality entirely, but to keep it within controlled boundaries — consistent, predictable, and aligned with how the system is intended to be used.

AI Chatbot Personality Is a Designed Interface

What this episode makes clear is simple.

AI chatbot personality is not just about style, and AI systems do not just generate answers.

They generate answers in a designed voice.

This is also why AI answers change depending on the system, even when the same question is asked.

That voice is shaped by:

- training data

- alignment processes

- product decisions

And it can be changed.
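
One way to picture the product-decision layer is as a style instruction that travels with every question. The sketch below is hypothetical: `VoiceConfig` and `build_messages` are invented names, and the role/content message layout is only the common chat convention, not a specific vendor's API. The source does not say which mechanism was actually used to adjust the tone, so this shows one plausible layer among several.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class VoiceConfig:
    """Hypothetical product-level style settings; not any vendor's real schema."""
    tone: str
    banned_imagery: tuple = ()

def build_messages(question, voice):
    """Wrap a user question in a style instruction (role/content chat layout)."""
    rules = [f"Answer in a {voice.tone} tone."]
    if voice.banned_imagery:
        rules.append(
            "Do not use imagery involving: " + ", ".join(voice.banned_imagery) + "."
        )
    return [
        {"role": "system", "content": " ".join(rules)},
        {"role": "user", "content": question},
    ]

# Two illustrative voices for the same product.
playful = VoiceConfig(tone="playful, character-driven")
neutral = VoiceConfig(
    tone="neutral, direct",
    banned_imagery=("goblins", "gremlins", "raccoons"),
)

question = "Why does my program crash on startup?"
playful_messages = build_messages(question, playful)
neutral_messages = build_messages(question, neutral)

# The user's question is identical in both cases; only the style instruction differs.
print(neutral_messages[0]["content"])
```

The design point is that the question and the voice are separate inputs. Swapping the configuration changes how the answer will sound without touching what was asked.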

The shift away from goblins, raccoons, and other character-like elements is not about removing a mistake.

It is about adjusting the interface through which information is delivered.

The system is not only answering questions.

It is presenting those answers in a way that has been deliberately chosen.

My Take

It is easy to treat this as a small, almost amusing adjustment.

It is not.

It shows that AI chatbot personality is not something the system develops on its own.

It is:

- selected

- reinforced

- and controlled

The model is not becoming more human or less human. It is being tuned to sound a certain way.

For individuals — especially freelancers, analysts, and knowledge workers — this matters more than it appears.

When you rely on AI systems daily, tone becomes part of how you judge quality.

A clear, confident, slightly “nerdy” answer can feel more reliable than a neutral one, even when both are based on the same underlying information.

That creates a blind spot.

You are not just evaluating the answer.

You are reacting to how the answer is presented.

What feels clearer can feel more correct.

And that is exactly where mistakes begin.

Once you recognise that, the system stops looking like a neutral tool.

It starts looking like a designed interface — one where tone, style, and personality are part of the output, not separate from it.

Sources

- BBC: OpenAI tells ChatGPT models to stop talking about goblins

https://www.bbc.com/news/articles/c5y9wen5z8ro

- OpenAI: Model behaviour, alignment, and system tuning

https://platform.openai.com/docs

- Nielsen Norman Group: AI tone, UX behaviour, and user perception

https://www.nngroup.com/articles/ai/

- Stanford Human-Centered AI: AI trust, perception, and system behaviour

https://hai.stanford.edu/
