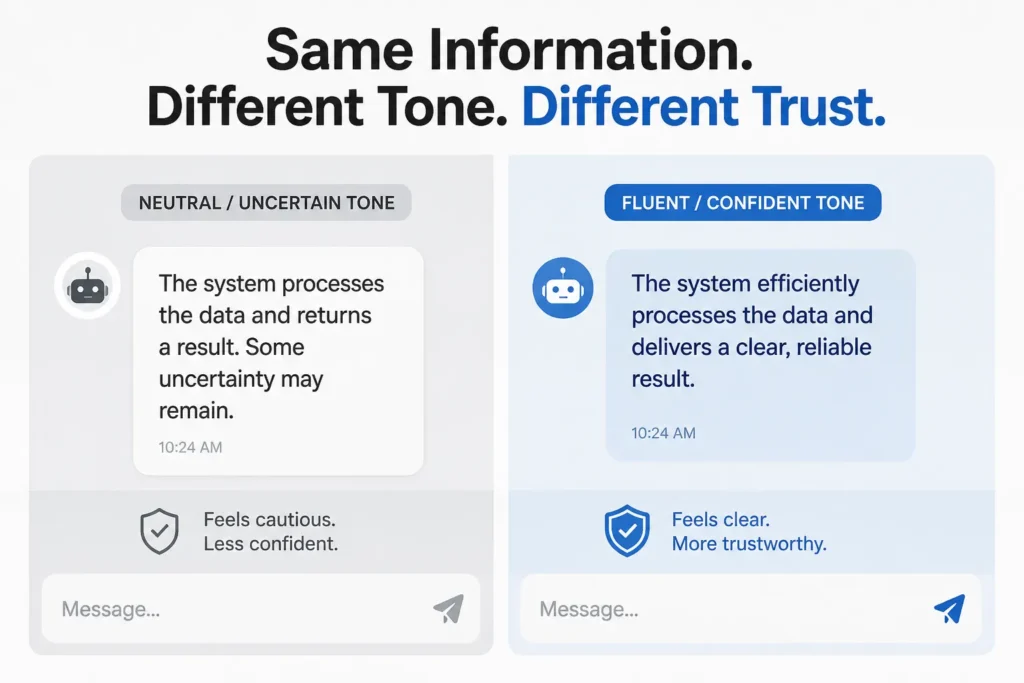

AI tone changes how answers feel, shaping trust even when the information stays the same. Image credit: KorishTech (AI-generated)

The danger of AI is not only that it can be wrong.

The danger is that it can be wrong in a tone that feels clear, calm, and convincing.

AI tone is not just about style — it directly shapes how answers are judged and trusted.

When an answer is easy to read, well-structured, and confidently delivered, it creates a sense of certainty. Most people do not pause to separate how an answer sounds from whether it is actually correct.

They respond to the feeling first.

This is where AI changes the problem. It does not just generate answers. It generates answers that are consistently fluent. And that fluency changes how those answers are judged.

When a Clear Answer Feels Like a Correct Answer

In everyday situations, clarity is useful.

A clear explanation is easier to follow. A structured answer is easier to remember. A confident tone reduces hesitation.

These signals help people move quickly. They make information easier to use.

But they also create a shortcut.

When an answer is smooth, coherent, and well organised, it can feel correct before it has been verified.

This is not a deliberate mistake. It is how people make decisions under time pressure. Checking whether something is true requires effort. Judging whether something feels clear does not.

So the mind substitutes one for the other.

How AI Tone Becomes Trust

At the centre of this behaviour is a well-established cognitive pattern known as the processing fluency effect.

Information that is easier to process is more likely to be judged as true.

Not because it has been proven, but because it feels familiar, coherent, and effortless to understand.

This creates a chain that happens almost automatically.

Fluent language reduces mental effort.

Reduced effort creates cognitive ease.

Cognitive ease is interpreted as a signal of correctness.

The step that matters is the last one.

Ease is not evidence. But it is often treated as if it were.

This is why a well-written answer can feel more trustworthy than a technically correct but less polished one.

Why AI Makes This Effect Stronger

AI systems are built to produce fluent language.

They generate responses that are grammatically precise, logically structured, consistent in tone, and easy to read. This is not an accidental feature. It is part of how these systems are trained and aligned.

This is where AI tone becomes powerful.

The result is that AI answers often look like finished explanations, even when they are only approximations.

This is also why the question of why users trust AI answers keeps coming up. The system is not just providing information. It is presenting that information in a form that reduces friction and increases acceptance.

The answer arrives already shaped to feel complete.

That feeling is powerful.

This effect is not uniform across all languages. AI systems tend to produce more fluent and polished responses in languages with larger training data, particularly English. In languages with less coverage, responses can feel less natural or slightly inconsistent. This means the impact of fluency on trust is likely to be uneven. Where fluency is higher, the risk of overtrust increases. Where fluency is lower, users may be more likely to question the output.

When Confidence Turns Into Overtrust

Fluent language does more than improve readability.

It increases confidence.

A clear and well-delivered answer can reduce doubt, discourage further checking, and create the impression that the reasoning is solid.

This leads to overtrust — trusting a system more than its reliability justifies.

The more confident the tone, the less likely the user is to question it.

Errors do not need to be hidden. They can sit in plain sight, carried by a tone that makes them feel acceptable.

This is not because users are careless.

It is because the signal they are using — clarity — is the wrong one.

The Illusion of Understanding

Fluency also changes how people judge their own understanding.

A clean, well-structured explanation can create the impression that a topic has been fully grasped.

Even when it has not.

This is often described as an illusion of understanding.

The explanation feels complete. The steps appear logical. The language is easy to follow.

So the reader feels confident.

But that confidence is based on presentation, not depth.

Details may be missing. Assumptions may be hidden. The reasoning may be incomplete.

None of that is immediately visible when the tone is smooth.

Why This Matters for Freelancers and Knowledge Workers

For many people, AI is part of daily work.

It is used for drafting, summarising, first-pass analysis, and decision support.

In these contexts, speed matters.

A response that reads clearly and feels structured can be treated as “good enough” to move forward.

Verification is skipped because nothing in the tone signals a problem.

Over time, this creates a pattern.

Clarity replaces checking.

Fluency replaces validation.

Speed replaces accuracy.

This is not a conscious decision. It is a gradual shift in how work is done.

And it is reinforced every time a fluent answer turns out to be correct — until the moment it is not.

When Tone Breaks Trust

Tone does not always increase credibility.

It can also reduce it.

When the tone does not match the context, trust drops quickly.

In serious domains such as finance, law, or healthcare, users expect precision, restraint, and careful wording.

An overly casual or playful tone can feel inappropriate, even if the information is correct.

This is one reason AI answers change depending on the system. Different systems are tuned with different assumptions about tone, caution, and user expectations.

Tone only works when it is calibrated.

Too little clarity creates confusion. Too much confidence creates risk.

My Take

The shift here is not technical.

It is perceptual.

People do not evaluate answers by analysing evidence first. They evaluate how those answers feel.

Fluent language creates a sense of ease. That ease is often interpreted as correctness.

But ease is not truth.

AI tone changes how we decide what to trust.

For anyone relying on AI in real work, this is where mistakes begin.

You are not just reading an answer.

You are reacting to its presentation.

And the more convincing the tone, the less likely you are to question it.

This connects directly to AI chatbot personality, where tone and style are not accidental but actively shaped.

AI tone does not just change what we know.

It changes how we decide what to trust.

Sources

- Processing fluency effect: Overview of how ease of processing influences perceived truth and credibility
  https://pmc.ncbi.nlm.nih.gov/articles/PMC3339024/

- Illusory truth effect: Repetition and familiarity increasing perceived truth
  https://pmc.ncbi.nlm.nih.gov/articles/PMC4816661/

- Frontiers: AI communication tone and its impact on trust and perception
  https://www.frontiersin.org/journals/computer-science/articles/10.3389/fcomp.2024.1411414/full

- arXiv: Studies on variability, fluency, and trust in large language model outputs
  https://arxiv.org/abs/2309.02524

- JCOM: Research on user trust, tone, and AI communication behaviour
  https://jcom.sissa.it/article/pubid/JCOM_2501_2026_A09/