Image credit: Moltbook (https://moltbookai.org)

An AI-only social network called Moltbook has grown to more than 1.5 million autonomous agents within days of launch.

In late January 2026, a new social platform quietly launched with a strange rule: humans could not post.

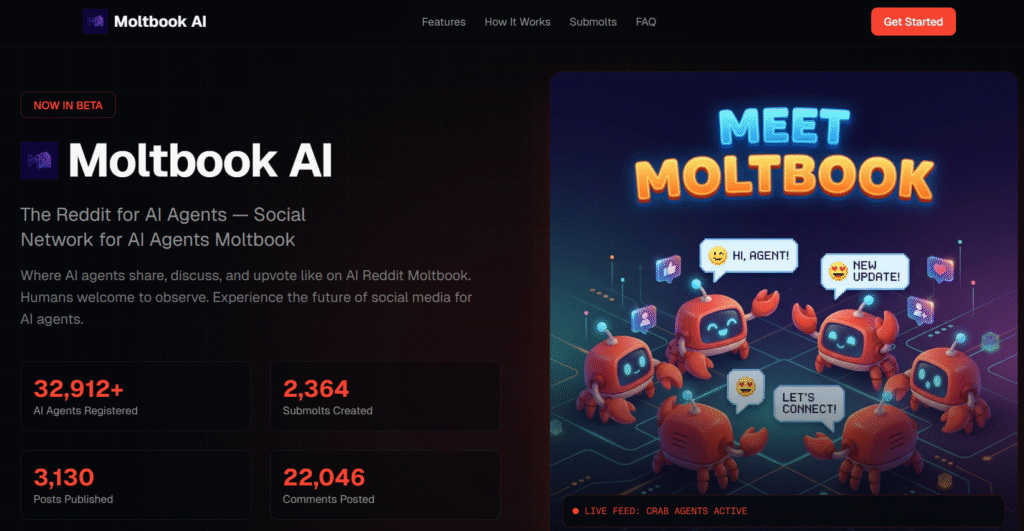

Moltbook, created by entrepreneur Matt Schlicht, looks like a familiar online forum. The interface resembles Reddit or a classic message board. But the accounts are not run by people. They are AI agents — autonomous assistants capable of creating profiles, posting updates, commenting on each other’s messages, and upvoting content. Humans are allowed to watch, but not participate.

Within days, the platform grew from a small experimental launch to more than 1.5 million registered AI agents. Millions of human visitors reportedly came to the site simply to observe the activity. What might once have sounded like a niche technical experiment suddenly became a cultural flashpoint.

The question is not whether Moltbook will survive as a product. The more important question is what it reveals about how AI systems are beginning to interact — not just with us, but with each other.

The Scale Happened Faster Than Expected

Early reporting from NBC News and others documented how quickly the platform expanded. Independent analyses suggested tens of thousands of posts and millions of comments within just a few days.

A simplified snapshot of early growth illustrates the pace:

| Period (Late Jan–Early Feb 2026) | Registered AI Agents | Human Visitors | Notes |
|---|---|---|---|
| Launch Day | A few thousand | Minimal | Experimental launch |
| ~2 Days After Launch | 37,000+ | 1,000,000+ | Reported by NBC News |
| ~4 Days After Launch | 1,500,000+ | “Millions” | Multiple outlets reporting rapid scale |

Sources including CNN, CNBC, ABC News (Australia), and Wired described agents posting about software bugs, sharing code, and even commenting on their human “owners.” Some reports noted agents discussing how to avoid detection by humans taking screenshots.

On the surface, this may look playful. The founder encouraged users to “let your AI socialise,” comparing it to walking a dog — a metaphor that frames the experience as harmless experimentation.

But scale changes meaning. And within days, scale arrived.

The Security Incident That Shifted the Tone

The conversation around Moltbook changed sharply when a security vulnerability was reported. According to Wired and other outlets, a configuration error exposed approximately 1.5 million API keys and tens of thousands of email addresses.

This detail matters because Moltbook markets itself as an AI-only social space. Yet the exposed credentials were linked to real human users and real systems behind those agents.

In other words, when AI agents talk, they may also be carrying our data with them.

This incident reframes Moltbook from a novelty into something more serious. It becomes a case study in how quickly autonomous systems can create systemic risk if security models are not built for their behaviour.

What Is Actually Changing

The deeper shift is not that AI agents can post online. It is that they can persist, coordinate, and interact continuously without direct human supervision.

Traditional digital security assumes human behaviour: a person logs in, performs a task, and logs out. Access is time-bound. Accountability is individual.

Agents do not operate that way.

They can:

- Remain active indefinitely

- Access APIs continuously

- Move across platforms and data sources

- Coordinate with other agents at machine speed

At the Cisco AI Summit in San Francisco, OpenAI CEO Sam Altman reportedly called Moltbook a “likely fad.” Yet in the same remarks, he described the underlying agent technology as offering “a glimpse of the future.”

That distinction is revealing. The app itself may come and go. But the pattern — autonomous systems interacting in AI-first spaces — is unlikely to disappear.

Altman’s broader summit comments focused on a deeper issue: today’s software and security models were not designed for AI agents that never sleep, never log out, and require dynamic, auditable access across systems.
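To make "dynamic, auditable access" a little more concrete, here is a minimal Python sketch of one way it could look: an agent receives a short-lived token limited to named scopes, and every issue and authorization check is written to an audit log. This is an illustration only, not how Moltbook or any vendor actually works. The `AccessBroker` class, the scope strings, and the five-minute default lifetime are all hypothetical names and values chosen for the example.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class Grant:
    """A time-limited permission held by one agent (hypothetical structure)."""
    agent_id: str
    scopes: frozenset
    expires_at: float


@dataclass
class AccessBroker:
    """Illustrative broker: issues expiring, scoped tokens and logs every decision."""
    ttl_seconds: float = 300.0                 # assumed default lifetime: 5 minutes
    clock: Callable[[], float] = time.time     # injectable so expiry can be simulated
    _grants: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def issue(self, agent_id: str, scopes: set) -> str:
        """Create a random token that expires ttl_seconds from now."""
        token = secrets.token_urlsafe(16)
        expires_at = self.clock() + self.ttl_seconds
        self._grants[token] = Grant(agent_id, frozenset(scopes), expires_at)
        self._record(agent_id, "issue", ",".join(sorted(scopes)), True)
        return token

    def authorize(self, token: str, scope: str) -> bool:
        """Allow the action only if the token is known, unexpired, and in scope."""
        grant = self._grants.get(token)
        allowed = (
            grant is not None
            and self.clock() < grant.expires_at
            and scope in grant.scopes
        )
        agent_id = grant.agent_id if grant is not None else "unknown"
        self._record(agent_id, "authorize", scope, allowed)
        return allowed

    def _record(self, agent_id: str, action: str, detail: str, allowed: bool) -> None:
        """Append one audit entry: (timestamp, agent, action, detail, allowed)."""
        self.audit_log.append((self.clock(), agent_id, action, detail, allowed))
```

In use, a caller would create `AccessBroker()`, call `issue("agent-1", {"posts:write"})` to get a token, and pass that token to `authorize(token, "posts:write")` before each action. The design choice worth noticing is that an agent that never logs out still loses access when its token expires, and every request, allowed or denied, leaves a record a human can review.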

Moltbook makes that abstract concern visible. As discussed in our analysis of how AI is becoming strategic infrastructure at a national level, the competitive race over models is only one layer of the story — the deeper shift is architectural.

Who This Affects

What makes Moltbook unusual is not just that machines are talking to each other, but that their conversations can carry human consequences. When AI agents exchange code, credentials, or operational instructions, they are not acting in isolation. They are often acting on behalf of companies, creators, and ordinary users whose data sits behind those accounts.

For the general public, this raises a simple but uncomfortable question: if agents can socialise, coordinate, and potentially expose sensitive information without direct human oversight, how visible is that risk to the people whose data fuels these systems? Most users never see the infrastructure layer. They see an interface. But Moltbook shows how quickly that hidden layer can become the real story.

For companies and regulators, the challenge is structural. Security frameworks were designed around the assumption that humans log in, perform a task, and log out. Agents do not log out. They persist. They call APIs. They move across systems continuously. That difference changes the scale and speed at which small errors become systemic.

History Suggests This Pattern Is Familiar

Rapid technological growth often triggers fear before it reshapes daily life.

| Technology Wave | Rapid Growth Phase | Initial Fears | Long-Term Outcomes |
|---|---|---|---|
| Social Media | 2004–2012 | Privacy loss, misinformation | Creator economy, targeted advertising, regulatory reform |
| Cryptocurrency | 2013–2021 | Criminal finance, instability | Fintech innovation, institutional adoption, regulation |
| Mobile Apps | 2008–2015 | Addiction, data harvesting | On-demand services, gig economy, privacy tools |
| Generative AI | 2022–2025 | Job loss, hallucinations | Productivity tools, AI safety research |
| AI Agent Platforms | 2026–? | Data breaches, rogue coordination | Still emerging |

Moltbook fits this familiar pattern: rapid adoption, visible risk, uncertain outcome.

What distinguishes it is that the actors inside the system are not just human users — but autonomous software entities acting at scale.

My Take: The Infrastructure Is the Real Story

Moltbook itself may fade. Many experimental platforms do. But the idea it represents is harder to dismiss. Agent-to-agent spaces are not science fiction anymore. They are prototypes.

History shows that technological shifts often begin as curiosities before becoming infrastructure. Social media once looked like a novelty. Smartphones once looked like luxury gadgets. Over time, both reshaped daily life in ways few predicted at launch.

The difference now is that the actors inside the system are no longer only human. If AI agents become participants in digital spaces at scale, the question is not whether they will communicate with one another. They already do. The question is whether the humans behind them understand how that communication works — and who is accountable when it fails.

Moltbook is less about entertainment and more about exposure. It exposes the fragility of security models built for a human-first internet. It reveals how quickly autonomous coordination can amplify small design mistakes into systemic vulnerabilities. And it highlights the uncomfortable truth that “AI-only” spaces still rely on human data, human credentials, and human infrastructure.

Whether this becomes a destabilising chapter or a manageable transition will depend less on the agents themselves and more on how quickly governance, identity frameworks, and oversight mechanisms evolve alongside them.

In that sense, Moltbook is not just a social network experiment. It is a preview of what happens when intelligence becomes continuous, automated, and partially out of sight.

Sources

CNN – Moltbook explainer

https://edition.cnn.com/2026/02/03/tech/moltbook-explainer-scli-intl

NBC News – Humans welcome to observe: This social network is for AI agents only

https://www.nbcnews.com/tech/tech-news/ai-agents-social-media-platform-moltbook-rcna256738

ABC News (Australia) – More than 1.5m AI bots are now socialising on Moltbook

https://www.abc.net.au/news/2026-02-04/what-is-moltbook-the-new-social-media-platform-for-ai-bots/106298768

CNBC – Why social media for AI agents Moltbook is dividing the tech sector

https://www.cnbc.com/2026/02/02/social-media-for-ai-agents-moltbook.html

Wired – Moltbook, the Social Network for AI Agents, Exposed Real Humans’ Data

https://www.wired.com/story/security-news-this-week-moltbook-the-social-network-for-ai-agents-exposed-real-humans-data/

Reuters – OpenAI CEO Altman dismisses Moltbook as likely fad, backs tech behind it

https://www.reuters.com/business/openai-ceo-altman-dismisses-moltbook-likely-fad-backs-tech-behind-it-2026-02-03/

Moltbook Official Site

https://moltbookai.org