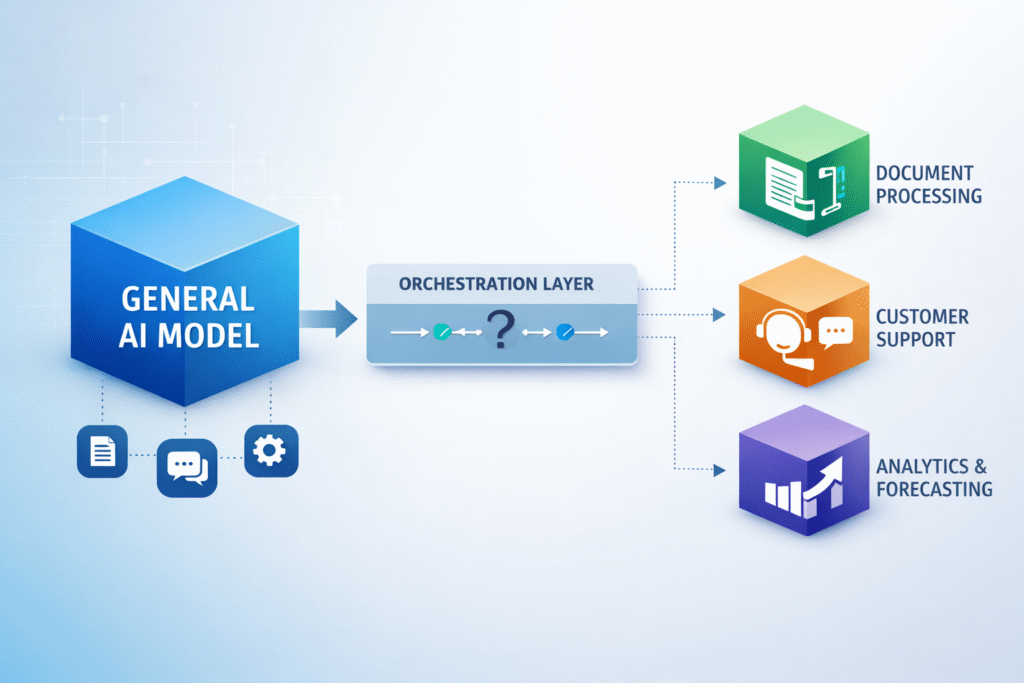

AI systems are shifting from a single general model to multiple specialised models coordinated within a structured system. Image credit: KorishTech (AI-generated).

AI model customization is becoming an architectural requirement, not an optimisation.

A recent MIT Technology Review analysis highlights a shift already visible in production systems: companies are moving away from relying on a single general-purpose model and are instead building systems composed of multiple task-specific models.

This shift is not driven by better ideas.

It is forced by constraints.

At scale, cost, reliability, and performance are no longer trade-offs. They are requirements. A model that is broadly capable but inconsistent or expensive cannot sustain production systems.

That is why the architecture is changing.

General Models Work Broadly — But Fail Narrowly

General-purpose models are designed to handle many types of tasks.

They can generate text, summarise content, and respond across domains. That breadth is useful, but it comes with a limitation: the model is not optimised for any one task.

In production systems, the requirement is not breadth.

It is consistency and repeatability.

A customer support system does not need a model that can answer anything. It needs a system that can produce the correct answer in a defined format, using the right terminology, every time. More importantly, that behaviour must be reproducible across thousands of similar cases and adaptable when underlying data, rules, or policies change.

This is where general models become unpredictable.

They are capable, but not controlled enough for structured workflows where reliability, reuse, and error handling matter more than flexibility.

Constraints Force Specialisation

The shift toward customisation is driven by three constraints: cost, performance, and reliability.

Gartner predicts that by 2027, organisations will use small, task-specific AI models at least three times more than general-purpose large language models. The reason is straightforward: within defined boundaries, specialised systems tend to be faster, cheaper, and more accurate.

Large models introduce two consistent problems at scale. The first is cost. Each request consumes significant compute, and this becomes expensive when repeated across high volumes. The second is variability. Even when answers are technically correct, they may not follow the required structure, tone, or level of precision.

That variability creates downstream cost. Outputs must be retried, corrected, or reviewed, and over time the cost of inconsistency can exceed the cost of computation itself.
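The arithmetic behind that claim can be sketched in a few lines. This is a minimal illustration, not a measured result: the function name, the prices, and the acceptance rates are all hypothetical, and the model assumes each attempt is accepted independently with a fixed probability, so the expected number of attempts is 1 / accept_rate.

```python
def cost_per_accepted_output(call_cost: float, accept_rate: float,
                             review_cost: float = 0.0) -> float:
    """Expected spend to obtain one accepted output.

    Assumes independent attempts, each accepted with probability
    `accept_rate`. Every rejected attempt also incurs `review_cost`
    (for example, the time spent correcting or reviewing it).
    """
    if not 0.0 < accept_rate <= 1.0:
        raise ValueError("accept_rate must be in (0, 1]")
    expected_attempts = 1.0 / accept_rate
    expected_rejections = expected_attempts - 1.0
    return expected_attempts * call_cost + expected_rejections * review_cost


# Hypothetical numbers, chosen only to show the shape of the trade-off.
general = cost_per_accepted_output(call_cost=0.01, accept_rate=0.80, review_cost=0.10)
specialist = cost_per_accepted_output(call_cost=0.002, accept_rate=0.98, review_cost=0.10)
print(f"general: {general:.4f}  specialist: {specialist:.4f}")
```

With these made-up inputs the general model costs about 0.0375 per accepted output, of which 0.025 is review and only 0.0125 is compute, while the specialist costs roughly 0.0041. The point is the structure of the formula, not the specific figures.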

Customisation reduces this by narrowing the system’s behaviour. Instead of trying to solve every problem, the model is aligned to a specific role, making outputs more predictable and easier to control.

This follows the same shift described in "Why AI Is Moving From Bigger Models to More Efficient Models", where AI progress is no longer defined by scale alone, but by how efficiently systems perform under real constraints.

AI model customization emerges from this constraint-driven shift, where systems are designed for control rather than general capability.

The same pattern appears in other engineered systems: as constraints increase, systems become narrower, more specialised, and more reliable.

From One Model to Task-Specific Systems

Once constraints become real, a single model stops being an effective design choice.

Different parts of a workflow demand different characteristics. Some steps require low latency and minimal cost, while others require strict formatting, domain knowledge, or deeper reasoning when inputs become ambiguous. Forcing one model to satisfy all of these simultaneously introduces trade-offs that are difficult to manage.

As a result, systems are being restructured around tasks rather than models.

A production workflow typically operates as a sequence. A request is first interpreted, then enriched with relevant context if needed, processed by a model suited to that task, and finally checked before returning an output. Each step is handled by a component that is optimised for that role.
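The four-step sequence described above can be sketched as a list of small functions applied in order. Everything here is an assumption made for illustration: the `Request` type, the step names, the keyword rule, and the `KNOWLEDGE` dictionary standing in for a retrieval step. A real system would call actual models and data stores at each stage.

```python
from dataclasses import dataclass, field

# Hypothetical knowledge base standing in for a retrieval step.
KNOWLEDGE = {"refund": "Refunds are issued within 14 days of purchase."}


@dataclass
class Request:
    text: str
    task: str = "unknown"
    context: list[str] = field(default_factory=list)
    output: str = ""


def interpret(req: Request) -> Request:
    """Step 1: decide which task the request belongs to (a keyword rule here)."""
    req.task = "refund" if "refund" in req.text.lower() else "general"
    return req


def enrich(req: Request) -> Request:
    """Step 2: attach relevant context, if the task has any."""
    if req.task in KNOWLEDGE:
        req.context.append(KNOWLEDGE[req.task])
    return req


def process(req: Request) -> Request:
    """Step 3: stand-in for a call to a model suited to the task."""
    if req.context:
        req.output = f"[{req.task}] {req.context[0]}"
    else:
        req.output = f"[{req.task}] Forwarding to a human agent."
    return req


def check(req: Request) -> Request:
    """Step 4: reject outputs that break the expected format."""
    if not req.output.startswith(f"[{req.task}] "):
        raise ValueError("output failed the format check")
    return req


PIPELINE = [interpret, enrich, process, check]


def run(text: str) -> Request:
    req = Request(text=text)
    for step in PIPELINE:
        req = step(req)
    return req


print(run("Can I get a refund?").output)
```

Because each step is a separate function, any one of them can be replaced or tested without touching the others.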

This is already reflected in enterprise platforms, where multiple models are deployed within a single system and assigned clearly defined responsibilities.

The model is no longer the system.

It is one part of it.

Orchestration Replaces the Single-Model Approach

When multiple models are used, coordination becomes the central problem.

Orchestration is the layer that manages this coordination. It determines which task is being performed, which model should handle it, and how information flows between different steps.
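A rule-based routing layer can be as simple as an ordered table of predicates. The sketch below is one possible shape, not a description of any particular platform: the handler functions are stubs standing in for specialist models, and the rules (a keyword match and a length threshold) are hypothetical.

```python
from typing import Callable

Handler = Callable[[str], str]


def summarise(text: str) -> str:
    """Stub for a summarisation specialist."""
    return "summary: " + text[:40]


def extract_order_id(text: str) -> str:
    """Stub for an extraction specialist: keeps only the digits."""
    digits = "".join(ch for ch in text if ch.isdigit())
    return "order_id: " + (digits or "none")


def general_model(text: str) -> str:
    """Stub for a general-purpose fallback model."""
    return "general: " + text


# Ordered routing table: (predicate, component name, handler). First match wins.
ROUTES: list[tuple[Callable[[str], bool], str, Handler]] = [
    (lambda t: "order" in t.lower(), "extract_order_id", extract_order_id),
    (lambda t: len(t) > 200, "summarise", summarise),
]


def route(text: str) -> tuple[str, str]:
    """Return the name of the chosen component and its output."""
    for matches, name, handler in ROUTES:
        if matches(text):
            return name, handler(text)
    return "general_model", general_model(text)


print(route("Where is order 4512?"))
```

Returning the component name alongside the output makes each routing decision visible to whatever monitoring sits around the system.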

In practice, orchestration is designed by humans and executed by systems.

| Layer | Owner | Function |
|---|---|---|
| Workflow design | Humans (architects, ML teams) | Define system structure and task boundaries |
| Routing layer | Software (rules or lightweight models) | Direct requests to the appropriate component |
| Specialist models | AI models | Perform narrow, task-specific operations |
| Monitoring & control | Humans + systems | Maintain quality, cost, and reliability |

A production system no longer generates a single response. It executes a controlled sequence of operations, each optimised for a specific constraint.

This is the architectural consequence of customisation.

Customisation Is a System Decision, Not a Model Tweak

Customisation is often described through techniques such as prompt design, retrieval-augmented generation, fine-tuning, or preference optimisation. These approaches shape how models behave, but they are not the core shift.

The real change is at the system level.

Companies are moving from asking which model to use toward deciding how a system should be structured to produce reliable outcomes under constraints. This includes decisions about how data is retrieved, how tasks are separated, how outputs are validated, and how failures are handled.
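Output validation and failure handling are the easiest of these decisions to make concrete. The following sketch assumes a hypothetical output schema (a JSON object with `answer` and `category` keys) and a `model` argument that is any callable returning text. It retries a bounded number of times and then hands the case to review rather than returning a malformed result.

```python
import json
from typing import Callable

REQUIRED_KEYS = {"answer", "category"}  # hypothetical output schema


def is_valid(raw: str) -> bool:
    """Accept only a JSON object that contains every required key."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and REQUIRED_KEYS.issubset(data)


def call_with_validation(model: Callable[[str], str], prompt: str,
                         max_attempts: int = 3) -> dict:
    """Call the model until its output validates or attempts run out."""
    for attempt in range(1, max_attempts + 1):
        raw = model(prompt)
        if is_valid(raw):
            return {"status": "ok", "attempts": attempt, "data": json.loads(raw)}
    # Failure path: flag for review instead of passing bad output downstream.
    return {"status": "needs_review", "attempts": max_attempts, "data": None}


def make_scripted_model(responses: list[str]) -> Callable[[str], str]:
    """Test double that replays fixed responses in order."""
    it = iter(responses)
    return lambda prompt: next(it)


flaky = make_scripted_model(["not json", '{"answer": "yes", "category": "billing"}'])
print(call_with_validation(flaky, "Is my invoice paid?"))
```

The retry limit and the review status are system-level choices: they apply regardless of which model sits behind the `model` callable.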

Modern platforms reflect this shift. They focus on serving multiple models, routing requests, evaluating outputs, and managing workflows rather than simply providing access to a single model.

Customisation defines how the system behaves, not just how the model responds.

Where General Models Still Fit

General models are not disappearing.

They are being repositioned.

They remain valuable in situations where tasks are unpredictable, where reasoning spans multiple domains, or where there is not enough high-quality data to safely specialise a system. In these cases, flexibility becomes more important than optimisation.

A useful comparison is transport systems. Cars did not eliminate bicycles or motorcycles. Different tools remain useful because the requirements differ.

General models are likely to follow the same pattern. They will handle open-ended reasoning and edge cases, while specialised models handle repetitive and structured tasks.
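One way to picture this layering is a specialist that abstains outside its boundary, with a general model picking up whatever it declines. The names, the canned answers, and the phrase-matching rule below are all hypothetical stand-ins, and both models are stubs.

```python
from typing import Optional

# Hypothetical answers a narrow specialist is trusted to give.
SPECIALIST_ANSWERS = {
    "reset password": "Use the 'Forgot password' link on the sign-in page.",
    "opening hours": "Support is open 09:00 to 17:00 on weekdays.",
}


def specialist(text: str) -> Optional[str]:
    """Answer only inputs inside the specialist's boundary; otherwise abstain."""
    lowered = text.lower()
    for phrase, answer in SPECIALIST_ANSWERS.items():
        if phrase in lowered:
            return answer
    return None


def general_model(text: str) -> str:
    """Stub for a general-purpose model handling open-ended input."""
    return f"(general model) open-ended answer to: {text}"


def answer(text: str) -> tuple[str, str]:
    """Try the specialist first and escalate to the general model if it abstains."""
    result = specialist(text)
    if result is not None:
        return "specialist", result
    return "general", general_model(text)


print(answer("How do I reset password?"))
print(answer("Compare your pricing with a competitor's."))
```

Structured, repetitive requests never reach the general model in this arrangement, which reserves it for the open-ended cases.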

The system becomes layered rather than replaced.

What This Reveals About AI Systems

This shift changes how AI systems are built and how organisations operate them.

The focus is moving away from model capability alone and toward system control. As multi-model systems become standard, the value of AI is increasingly determined by how well a system manages evaluation, routing, monitoring, and failure handling.

This also changes the roles involved. The emphasis moves toward system design, orchestration, and operational governance rather than isolated model expertise.

Technology providers are already adapting. Instead of focusing only on larger models, they are investing in platforms that support model serving, routing, orchestration, and optimisation.

The model is no longer the centre.

The system is.

What This Reveals About AI Model Customization

This is why AI model customization is becoming an architectural requirement.

The challenge is no longer to build a model that can do everything.

It is to build a system that can do one thing reliably, repeatedly, and under constraint.

Customisation is how that reliability is achieved.

My Take

This is a shift from general capability to controlled systems.

That makes AI model customization a structural change in how systems are built, not a feature upgrade.

The early assumption behind large-model deployment was that one model would gradually absorb more tasks over time. In practice, that approach becomes unstable once cost, reliability, and repeatability matter at production scale.

What replaces it is structure.

Instead of relying on one model to do everything, systems are broken into smaller components where each task is clearly defined and handled by the most appropriate model. This reduces variability and makes the system easier to operate, monitor, and improve.

It is not a more ambitious design.

It is a more stable one.

Sources

MIT Technology Review — Shifting to AI Model Customization Is an Architectural Imperative

https://www.technologyreview.com/2026/03/31/1134762/shifting-to-ai-model-customization-is-an-architectural-imperative/

Gartner — By 2027 Organizations Will Use Small Task-Specific AI Models Three Times More Than General-Purpose LLMs

https://www.gartner.com/en/newsroom/press-releases/2025-04-09-gartner-predicts-by-2027-organizations-will-use-small-task-specific-ai-models-three-times-more-than-general-purpose-large-language-models

AWS — Intelligent Prompt Routing for Amazon Bedrock

https://aws.amazon.com/bedrock/intelligent-prompt-routing/

McKinsey — The State of AI

https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai