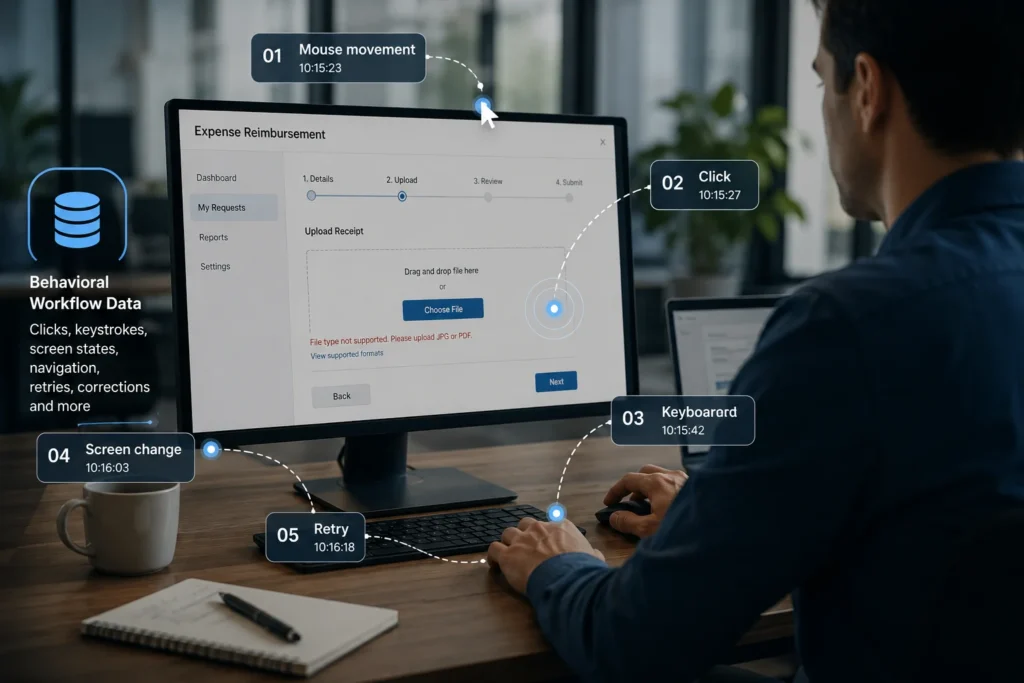

AI systems increasingly learn from behavioural workflow data such as clicks, retries, navigation paths, and task execution sequences. Image credit: KorishTech (AI-generated)

AI can read a manual and still fail at the job.

That is the core problem human behaviour data is meant to address.

For years, most AI systems learned from static information: books, websites, documents, code repositories, and finished outputs. That training helped models generate text, answer questions, summarise information, and recognise patterns across enormous datasets.

But modern AI systems are increasingly expected to do something different.

They are expected to operate software, navigate workflows, interact with interfaces, recover from mistakes, and complete tasks inside changing environments.

That requires a different kind of learning.

AI human behaviour data captures how people move through tasks step by step inside real software environments.

A completed document only shows the result. Behavioural workflow data shows the path: the clicks, pauses, corrections, retries, shortcuts, and decisions that happened before the result appeared.

AI human behaviour data is becoming important because execution systems fail between steps, not just at the final output.

Recent reporting on Meta Platforms highlighted this shift clearly. In April 2026, Reuters, the BBC, and other outlets reported that the company had started collecting employee interaction data (including mouse movements, clicks, keystrokes, and occasional screen snapshots) to help train AI systems designed to perform office work.

The important part was not the monitoring itself.

It was what the monitoring revealed about where AI development is heading.

Instructions Tell AI the Goal, Not the Path

Most instructions describe work as if it unfolds cleanly.

Open the file. Verify the information. Submit the request.

But real work rarely behaves like that.

Consider a simple expense reimbursement process.

An employee uploads a receipt, notices the file format is rejected, checks a previous submission for guidance, switches to another system to confirm the expense category, corrects a date field, retries the upload, and only then submits the request successfully.

The finished reimbursement form hides almost all of that work.

The manual hides even more.

Written instructions usually describe the ideal process rather than the operational reality. They explain what should happen, not what people actually do when systems become confusing, incomplete, inconsistent, or resistant.

A policy document may simply say “attach receipt and submit request.” It does not show what happens when the receipt exceeds the file limit, when the expense category is unclear, or when the user checks previous submissions to avoid rejection.

That missing layer matters more than it first appears.

Real work contains hesitation, retries, workarounds, interruptions, invisible checks, and small judgment calls that experienced workers make automatically. People constantly adapt to interface limitations, missing information, changing conditions, and imperfect systems.

Most of that adaptation never appears in formal documentation.

But it appears clearly in behavioural workflow traces.

This is why AI human behaviour data is becoming increasingly valuable for execution-focused AI systems.

A sequence of pauses before clicking a button may indicate uncertainty. Repeated switching between tools may reveal hidden workflow dependencies. A retry after a failed upload shows how users recover from friction inside the system.
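As a rough illustration of how signals like these might be read from a trace, the sketch below scans a list of timestamped events and counts long pauses, tool switches, and retries. The event fields (`t`, `tool`, `action`, `ok`), the tool names, and the 5-second pause threshold are all assumptions made for this example, not a format described in any of the reporting.

```python
from dataclasses import dataclass

@dataclass
class Event:
    t: float          # seconds since the task started (hypothetical field)
    tool: str         # application in focus when the action happened
    action: str       # e.g. "upload", "click_submit"
    ok: bool = True   # whether the action succeeded

def trace_signals(events, pause_threshold=5.0):
    """Count long pauses, tool switches, and retries in one trace."""
    pauses = switches = retries = 0
    for prev, cur in zip(events, events[1:]):
        if cur.t - prev.t >= pause_threshold:
            pauses += 1           # a long gap before the next action
        if cur.tool != prev.tool:
            switches += 1         # the user moved to another tool
    failed = set()
    for e in events:
        key = (e.tool, e.action)
        if key in failed:
            retries += 1          # same action attempted again after a failure
            failed.discard(key)
        if not e.ok:
            failed.add(key)
    return {"pauses": pauses, "tool_switches": switches, "retries": retries}

# The reimbursement example, written as a hypothetical event list.
trace = [
    Event(0.0, "expenses", "upload", ok=False),
    Event(9.0, "expenses", "open_previous_submission"),
    Event(14.0, "finance_portal", "lookup_category"),
    Event(20.0, "expenses", "edit_date"),
    Event(23.0, "expenses", "upload"),
    Event(25.0, "expenses", "click_submit"),
]
signals = trace_signals(trace)
```

Counts like these are only proxies. A long pause may mean uncertainty, or it may mean the user stepped away from the desk, which is part of why behavioural traces need careful interpretation.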

This is the kind of operational context static instructions remove.

And it is increasingly the context AI execution systems need.

AI Learns Real Work One State Change at a Time

Modern AI execution systems do not simply need to know what a successful answer looks like.

They need to learn what action usually follows a specific situation.

That is why state-dependent decisions matter.

A workflow trace records how a task unfolds over time: clicks, navigation paths, keyboard shortcuts, retries, corrections, screen changes, interruptions, and transitions between different system states.

The important part is not the isolated action itself.

It is the relationship between actions and changing environments.

In the reimbursement example, uploading the receipt changes the system state. That new state determines which actions become valid next. If the upload fails, the next possible actions change again.

To a human worker, this adjustment feels obvious.

To an AI system, it must be learned.

The same click can mean completely different things depending on the surrounding context. Clicking “submit” after all required documents are attached is different from clicking “submit” before validation is complete. The visible action may look identical, but the surrounding state changes the meaning entirely.
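The point about identical clicks can be made concrete with a toy state model. The sketch below is a minimal illustration, assuming a made-up reimbursement form with three flags; the state fields, action names, and rules are hypothetical and do not describe any real expense system.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class FormState:
    receipt_attached: bool = False
    validated: bool = False
    submitted: bool = False

def valid_actions(state):
    """Actions available in a given state (illustrative rules only)."""
    if state.submitted:
        return set()
    actions = {"upload_receipt", "submit"}
    if state.receipt_attached and not state.validated:
        actions.add("validate")
    return actions

def step(state, action, upload_ok=True):
    """Apply one action and return (next_state, outcome)."""
    if action not in valid_actions(state):
        return state, "invalid"
    if action == "upload_receipt":
        if not upload_ok:
            return replace(state, receipt_attached=False, validated=False), "upload_failed"
        return replace(state, receipt_attached=True, validated=False), "uploaded"
    if action == "validate":
        return replace(state, validated=True), "validated"
    # "submit": the same click, judged by the state it lands in
    if state.receipt_attached and state.validated:
        return replace(state, submitted=True), "accepted"
    return state, "rejected"

s0 = FormState()
_, early = step(s0, "submit")          # submit before anything is attached
s1, _ = step(s0, "upload_receipt")
s2, _ = step(s1, "validate")
s3, late = step(s2, "submit")          # identical click, different state
```

Here `early` comes back as `"rejected"` and `late` as `"accepted"`, even though the action string is the same in both calls. The outcome depends on the state, not on the click.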

This is why execution systems are fundamentally different from traditional chatbot systems.

A chatbot mainly predicts plausible language.

An execution system must navigate sequences of state-dependent decisions across changing environments.

Research on learning from demonstration frames this as learning what happens next inside a workflow environment. The system is not only learning the final outcome. It is learning how one action changes the environment and constrains the next available action.
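One very simple way to picture "learning what happens next" is a frequency table over observed (state, action) pairs. The sketch below is a toy form of imitation from demonstrations, assuming hand-labelled state names that are invented for this example; real systems would use learned models over screen content rather than a lookup table.

```python
from collections import Counter, defaultdict

def fit_next_action(traces):
    """Count which action followed each observed state across all traces."""
    counts = defaultdict(Counter)
    for trace in traces:
        for state, action in trace:
            counts[state][action] += 1
    return counts

def predict(counts, state):
    """Return the most frequently observed action for a state, or None if unseen."""
    if state not in counts:
        return None
    return counts[state].most_common(1)[0][0]

# Three hypothetical demonstrations of the reimbursement task.
traces = [
    [("empty_form", "upload"), ("upload_failed", "convert_file"),
     ("file_ready", "upload"), ("receipt_attached", "submit")],
    [("empty_form", "upload"), ("receipt_attached", "submit")],
    [("empty_form", "upload"), ("upload_failed", "convert_file"),
     ("file_ready", "upload"), ("receipt_attached", "check_category"),
     ("category_confirmed", "submit")],
]
model = fit_next_action(traces)
```

With these traces, `predict(model, "upload_failed")` returns `"convert_file"`, because that is what the demonstrators did after a failed upload. A state that never appeared in the data returns `None`, which hints at how brittle pure imitation can be outside the situations it has seen.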

This is one reason workflow learning has become more important in AI agents, software copilots, robotics, and automation systems.

The challenge is no longer simply producing a convincing answer.

It is maintaining reliable behaviour across multiple connected steps.

The Final Output Hides Where the Work Actually Happened

A finished output compresses the entire process into a single visible result.

A completed reimbursement request does not show:

- which errors appeared

- what information was missing

- which system was checked first

- where the user hesitated

- what was retried

- or how problems were resolved

But these hidden moments are often where execution systems fail first.

Two employees may produce the same final output while following completely different workflows. One may complete the task smoothly in two minutes. Another may struggle through multiple retries, corrections, and interface checks before reaching the same result.

Static outputs flatten those differences into one endpoint.

Behavioural workflow data preserves the path.

That difference changes what the AI system is actually learning.

Traditional output-focused training teaches models what successful results look like. Behavioural workflow learning teaches systems how success was achieved step by step, preserving the sequence of decisions, corrections, retries, and environmental changes that happened along the way.

That distinction matters because execution systems are not judged only by the final answer. They are judged by whether they can move reliably through the process required to reach it.

Execution systems do not usually fail because they cannot imagine the final answer.

They fail because they choose the wrong next action before they get there.

Why AI Human Behaviour Data Matters for AI Agents

This shift becomes clearer when comparing AI agents with traditional chatbots.

A chatbot mainly generates responses.

An AI agent must:

- operate interfaces

- navigate software tools

- monitor changing conditions

- recover from interruptions

- continue tasks across multiple stages

- and adjust behaviour as environments change

That requires behavioural workflow data.

AI human behaviour data helps agents learn how actions unfold across changing interface states and operational workflows.

Recent OpenAI guidance on agent systems emphasises workflow traces, trace grading, and step-level evaluation. Instead of evaluating only the final answer, developers analyse the sequence of actions the system followed while attempting the task.
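The difference between grading an outcome and grading a trace can be sketched in a few lines. The code below is a generic illustration only: it does not use or imitate OpenAI's API, and the trace format, state names, and `allowed` rules are hypothetical.

```python
def grade_final(trace, expected_outcome):
    """Outcome-only check: did the run end in the expected result?"""
    return trace[-1]["result"] == expected_outcome

def grade_steps(trace, allowed):
    """Step-level check: flag each step whose action was not allowed in its state."""
    issues = []
    for i, step in enumerate(trace):
        if step["action"] not in allowed.get(step["state"], set()):
            issues.append((i, step["state"], step["action"]))
    return issues

# Hypothetical rules for the reimbursement example.
allowed = {
    "empty_form": {"upload"},
    "upload_failed": {"convert_file", "upload"},
    "receipt_attached": {"check_category", "submit"},
}

# A run that ends well but starts with a premature submit.
agent_trace = [
    {"state": "empty_form", "action": "submit", "result": "rejected"},
    {"state": "empty_form", "action": "upload", "result": "uploaded"},
    {"state": "receipt_attached", "action": "submit", "result": "accepted"},
]

final_ok = grade_final(agent_trace, "accepted")
step_issues = grade_steps(agent_trace, allowed)
```

The outcome-only check passes, while the step-level check reports the premature submit at step 0. An evaluation that looks only at the last line would never see that mistake.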

That reflects a larger industry shift.

Execution systems are now judged not only by what they produce, but by how reliably they move through workflows.

This shift also explains why AI agents increasingly rely on orchestration and workflow control systems to complete multi-step tasks reliably.

It is also why building interface-based AI systems has become a major challenge.

Graphical user interfaces contain dropdown menus, hidden states, navigation paths, keyboard shortcuts, pop-up interruptions, and environment-specific conditions that do not reduce neatly to text.

An instruction manual may describe the workflow generally, but it cannot fully capture how people adapt when the interface behaves unexpectedly.

That is where behavioural traces become valuable.

Meta’s reported employee-monitoring initiative reflected exactly this problem. According to reporting from Reuters and the BBC, the company wanted behavioural interaction data because AI systems still struggle with real software interaction tasks that humans handle routinely.

The issue was not language generation.

It was execution reliability inside operational environments.

This challenge is becoming increasingly important as companies develop:

- software agents

- automation copilots

- GUI-based AI assistants

- enterprise workflow systems

- and autonomous execution tools

The more AI systems operate inside real environments, the more behavioural workflow learning becomes necessary.

Human Behaviour Data Can Teach the Wrong Workflow

Behavioural learning also creates new problems.

Real human behaviour is inconsistent.

The same task may be completed differently depending on:

- experience

- stress

- tool familiarity

- personal habits

- risk tolerance

- or organisational culture

That makes behavioural workflow data noisy.

An employee may use an inefficient workaround simply because the official process is frustrating. Another worker may rely on shortcuts that only function in narrow situations. A third may repeatedly retry actions because they misunderstood the interface.

If AI systems learn directly from those workflows, they may inherit the same weaknesses.
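One common response to noisy demonstrations is to filter them before training. The sketch below shows a deliberately crude heuristic, assuming a made-up demo format with a `success` flag: it keeps successful traces and drops those dominated by immediately repeated actions. The field names, action names, and the `max_repeats` threshold are hypothetical.

```python
def count_repeats(actions):
    """Number of times an action immediately repeats the previous one."""
    return sum(1 for a, b in zip(actions, actions[1:]) if a == b)

def filter_demonstrations(demos, max_repeats=1):
    """Keep successful demos with at most `max_repeats` immediate repeats."""
    return [
        d for d in demos
        if d["success"] and count_repeats(d["actions"]) <= max_repeats
    ]

demos = [
    {"worker": "a", "actions": ["upload", "submit"], "success": True},
    # Repeated retries caused by misreading the interface.
    {"worker": "b", "actions": ["upload", "upload", "upload", "upload", "submit"],
     "success": True},
    # An unofficial workaround that happens to succeed.
    {"worker": "c", "actions": ["email_pdf_to_self", "upload", "submit"],
     "success": True},
    {"worker": "d", "actions": ["upload", "submit"], "success": False},
]
kept = [d["worker"] for d in filter_demonstrations(demos)]
```

The filter keeps workers `a` and `c`. It removes the obvious retry loop, but the workaround passes untouched, because nothing in the visible actions says whether it is a sensible habit or a fragile one.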

This is one reason observation is not the same as understanding.

A system can copy visible behaviour without understanding why the behaviour worked or when it should be applied. It may reproduce a shortcut successfully in one environment and fail completely in another.

More behavioural data does not automatically solve this problem.

In some cases, it increases complexity.

A system trained on large volumes of inconsistent workflows may reproduce the most common pattern more consistently while still failing to develop reliable judgment about when that pattern applies.

This creates one of the biggest difficulties in behavioural AI systems.

The challenge is no longer only predicting language correctly.

It is interpreting messy human behaviour without copying the wrong operational logic.

The Bigger Shift Is From Language Prediction to Environment Interaction

The deeper shift behind all of this is structural.

For years, mainstream AI development focused heavily on internet-scale text prediction. Systems learned patterns across documents, conversations, and public information.

But execution systems require something different.

They require interaction with environments:

- software systems

- operational workflows

- interfaces

- enterprise tools

- physical systems

- and changing real-world conditions

That changes what valuable training data looks like.

Static text can describe a process, but behavioural traces show how humans actually move through tasks under real conditions.

This is why workflow learning is becoming increasingly important across:

- AI agents

- software copilots

- robotics

- workflow automation

- enterprise execution systems

- and interface-based AI tools

The challenge is no longer only generating correct outputs.

It is maintaining reliable behaviour across long sequences of decisions inside unpredictable environments.

That is a fundamentally different problem.

And it may require fundamentally different training data.

My Take

The important shift is not simply that AI systems are collecting more data.

It is that execution systems increasingly require data that shows how work unfolds over time inside real environments.

That changes what becomes valuable.

AI human behaviour data is valuable because it preserves the operational sequence behind real work instead of only storing finished outputs.

For years, internet text helped AI systems learn patterns in language. Behavioural workflow traces now help systems learn patterns in action.

But there is still a major limitation.

A system can observe behaviour without understanding why the behaviour worked, when it should change, or whether the workflow itself was flawed.

That creates a difficult problem for execution-focused AI, because copying human behaviour is not the same as developing human judgment.

This behavioural shift also expands on the execution-layer transition explored in our article on AI prosthetic arms and physical execution systems.

As AI systems move further into software environments, workflows, and operational systems, that gap between observation and understanding may become one of the defining constraints of the next generation of AI systems.

Sources

Reuters — Meta to start capturing employee mouse movements and keystrokes to train AI

https://www.reuters.com/sustainability/boards-policy-regulation/meta-start-capturing-employee-mouse-movements-keystrokes-ai-trai

BBC — Meta to track workers’ clicks and keystrokes to train AI

https://www.bbc.com/news/articles/cvglyklz49jo

OpenAI — Trace Grading

https://developers.openai.com/api/docs/guides/trace-grading

OpenAI — Evaluate Agent Workflows

https://developers.openai.com/api/docs/guides/agent-evals

UI-TARS Research — Pioneering Automated GUI Interaction with Native Agents

https://arxiv.org/abs/2604.19925

A Survey of Demonstration Learning

https://arxiv.org/abs/2303.11191

Learning Complex Sequential Tasks from Demonstration

https://www.cs.cmu.edu/~mmv/papers/08aamas-harini.pdf