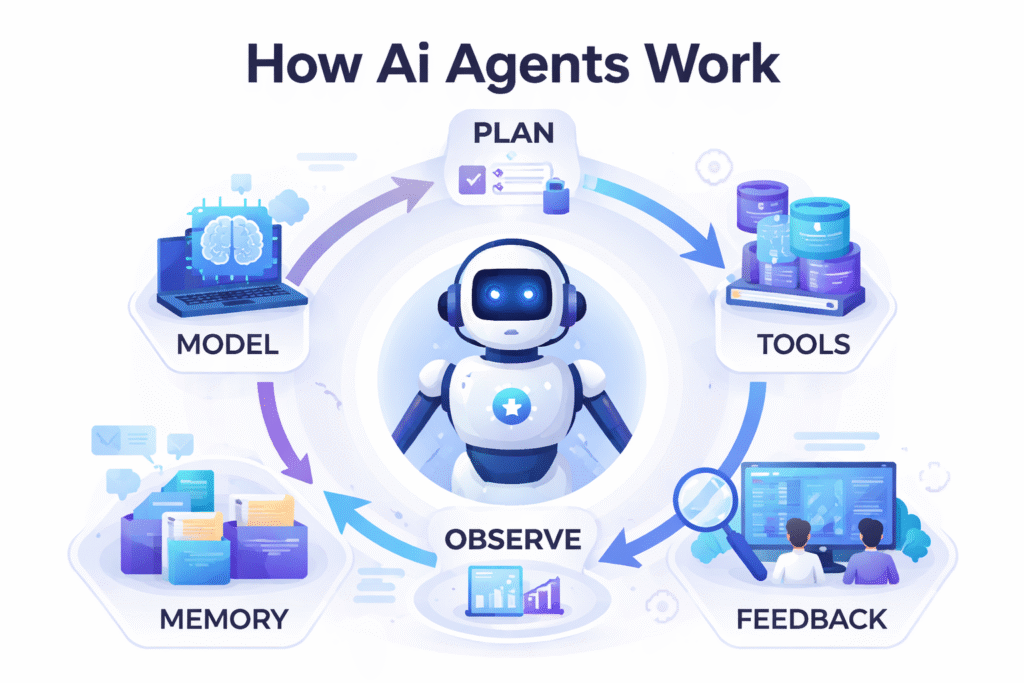

AI agents combine models, tools, memory, and orchestration to operate as structured systems rather than single responses. Image credit: KorishTech (AI-generated).

AI agents are systems that perform tasks by combining models, tools, and memory into a structured workflow.

Unlike chatbots, which respond to prompts, AI agents can take actions, retrieve data, and complete multi-step workflows.

For most people, AI still means typing a prompt and getting an answer.

But that interaction model breaks the moment a task requires more than one step. Writing an answer is one thing. Searching for data, checking it, updating a system, and verifying the result is something else entirely.

This gap is why AI agents are emerging.

They are not simply better chatbots. They are systems designed to carry a task forward — across steps, tools, and decisions — without requiring the user to manually coordinate everything.

AI Agents Are Not Just Models — They Are Systems That Work Across Tools

A model, in simple terms, is a prediction engine. It takes input and generates output — usually text — based on patterns it has learned. On its own, it does not perform actions in the real world.

AI agents are different because the model is only one part of a larger system.

A working agent typically combines a model that interprets instructions, tools that connect to external systems such as APIs or databases, memory that stores context and intermediate results, and an orchestration layer that decides what happens next.

Each part exists because the model alone cannot complete real tasks.

For example, in a customer support system, the model can understand the request, but it cannot retrieve account data or update a record. That requires tools. Once the data is retrieved, the system must remember what it found and decide whether to respond, escalate, or continue. That requires memory and orchestration.

This is what defines AI agents as systems rather than standalone models.

In this context, a system means a set of connected components that work together to complete a task, rather than a single model producing an answer.
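Here is a minimal Python sketch of how the four components fit together. Everything in it is hypothetical: `Agent`, `toy_model`, and the `lookup_account` tool are illustrative names. The "model" is a plain function that picks a tool, standing in for a real language-model call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical signatures: a "model" picks a tool name from the instruction and memory,
# and a "tool" takes a query string and returns a result string.
ModelFn = Callable[[str, List[str]], str]
ToolFn = Callable[[str], str]


@dataclass
class Agent:
    model: ModelFn                    # interprets the instruction (stand-in for an LLM call)
    tools: Dict[str, ToolFn]          # connections to external systems (APIs, databases)
    memory: List[str] = field(default_factory=list)  # context and intermediate results

    def step(self, instruction: str) -> str:
        # Orchestration: ask the model which tool to use, run it, and record the result.
        tool_name = self.model(instruction, self.memory)
        result = self.tools[tool_name](instruction)
        self.memory.append(f"{tool_name} -> {result}")
        return result


def toy_model(instruction: str, memory: List[str]) -> str:
    # Hypothetical rule-based stand-in for a model: always routes to the account lookup.
    return "lookup_account"


agent = Agent(
    model=toy_model,
    tools={"lookup_account": lambda query: "account 42: active"},  # fake CRM lookup
)
print(agent.step("Is my account active?"))  # account 42: active
print(agent.memory)  # ['lookup_account -> account 42: active']
```

The split matters because the model only chooses what to do. The tools do the work, and memory keeps a trail that the next orchestration step can consult.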

AI Agents vs Chatbots: Why One Responds and the Other Operates

A chatbot is structured around a single interaction loop: input, response, stop.

That works for isolated questions. But it breaks down when a task requires continuity.

Take a simple example: “Where is my order?”

A chatbot can explain how to check order status. But it cannot access the actual order, verify delivery progress, or take action.

An AI agent can.

It can query the order system, retrieve status, compare expected delivery timelines, and decide whether escalation or resolution is required. The system continues operating after the first step instead of waiting for another prompt.

The difference is not conversational ability. It is whether the system can move from explanation into execution.

How AI Agents Work: The Plan–Act–Observe Loop

The core mechanism behind AI agents is a control loop.

Instead of producing a single answer, the system repeatedly evaluates what to do next based on the current state of the task.

In practice:

- Plan: interpret the goal and decide the next step

- Act: call a tool or perform an operation

- Observe: inspect the result

- Repeat: adjust based on feedback

This loop is visible in real systems.

In coding agents, the system reads code, identifies a problem, edits files, runs tests, and checks whether the issue is resolved. If not, the loop continues.

The key point is that the system does not assume the first answer is correct. It works iteratively, using results from each step to guide the next action.

This loop is the core mechanism behind how AI agents actually work in real systems.
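The loop, and the coding-agent example that follows it, can be sketched as a small driver function. This sketch rests on a few assumptions. `plan`, `act`, and `observe` are hypothetical callables supplied by the caller. Task state is a plain dictionary. `max_steps` is an illustrative safety bound, so a task that never converges stops instead of looping forever.

```python
from typing import Callable, List, Optional, Tuple

State = dict  # hypothetical: task state is a plain dictionary


def run_loop(
    state: State,
    plan: Callable[[State], Optional[str]],   # returns the next action, or None when done
    act: Callable[[str, State], str],         # performs the action, returns a raw result
    observe: Callable[[str, State], State],   # folds the result back into the state
    max_steps: int = 10,
) -> Tuple[State, List[str]]:
    history: List[str] = []
    for _ in range(max_steps):
        action = plan(state)                  # Plan: decide the next step
        if action is None:                    # the planner judges the goal reached
            break
        result = act(action, state)           # Act: call a tool or perform an operation
        state = observe(result, state)        # Observe: inspect the result
        history.append(action)                # Repeat: the next plan sees the updated state
    return state, history


# Toy "fix until tests pass" task: each step repairs one failing test.
def plan(state):
    return "fix_one_test" if state["failing"] > 0 else None


def act(action, state):
    return "ok"  # pretend the edit succeeded


def observe(result, state):
    return {**state, "failing": state["failing"] - 1}


final, steps = run_loop({"failing": 3}, plan, act, observe)
print(final, steps)  # {'failing': 0} ['fix_one_test', 'fix_one_test', 'fix_one_test']
```

The step bound is a design choice rather than part of the loop itself. Without it, a planner that never returns `None` would keep acting indefinitely.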

Why AI Agents Are Used in Real Work (Customer Service Example)

Most business tasks are not single decisions. They are sequences.

Customer service is one of the clearest examples of this shift.

A traditional chatbot can answer frequently asked questions. It can explain return policies or provide general guidance. But the moment a request becomes specific, the limitations appear.

A request such as “Where is my order?” requires more than a response. It requires the system to retrieve account data, check delivery status, compare expected timelines, and decide whether escalation is needed.

This is where AI agents are being deployed in production systems.

Instead of responding with instructions, the system can query internal databases, retrieve the relevant order, check logistics data, and generate a response based on real information. In more advanced setups, it can also trigger actions such as refund processing or ticket escalation.
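Here is a minimal sketch of the "Where is my order?" workflow. It assumes two in-memory dictionaries stand in for the internal order database and the logistics system. The names `ORDERS`, `SHIPMENTS`, `handle_where_is_my_order`, and the `escalate_ticket` action are hypothetical, and the code only shows the query, check, and decide sequence.

```python
from dataclasses import dataclass
from typing import Dict, Optional

# Hypothetical stand-ins for the order database and the logistics system.
ORDERS: Dict[str, Dict[str, str]] = {
    "A100": {"customer": "c1", "tracking": "T1"},  # account data linked to each order
    "A200": {"customer": "c2", "tracking": "T2"},
}
SHIPMENTS: Dict[str, Dict[str, int]] = {
    "T1": {"days_in_transit": 3, "expected_days": 5},
    "T2": {"days_in_transit": 9, "expected_days": 5},
}


@dataclass
class Outcome:
    reply: str                    # response generated from real data
    action: Optional[str] = None  # follow-up action to trigger, if any


def handle_where_is_my_order(order_id: str) -> Outcome:
    # Step 1: query the order database.
    order = ORDERS.get(order_id)
    if order is None:
        return Outcome("I could not find that order, so I have passed it to a colleague.",
                       action="escalate_ticket")
    # Step 2: check logistics data for that order's shipment.
    shipment = SHIPMENTS[order["tracking"]]
    late_by = shipment["days_in_transit"] - shipment["expected_days"]
    # Step 3: decide whether to resolve or escalate, based on the retrieved data.
    if late_by > 0:
        return Outcome(f"Your order is {late_by} days late; I have opened a support ticket.",
                       action="escalate_ticket")
    return Outcome("Your order is in transit and on schedule.")


for order_id in ["A100", "A200", "Z999"]:
    result = handle_where_is_my_order(order_id)
    print(order_id, result.action, result.reply)
```

In a real deployment, each dictionary lookup would likely be a tool call to a CRM or logistics API, and `escalate_ticket` would hand off to a ticketing system. The shape of the decision would stay the same.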

Customer service, coding assistants, and document processing systems are some of the most common AI agent examples used today.

This is already visible in real-world AI agent use cases, where systems increasingly handle tasks rather than just respond to prompts (see: How People Are Already Using AI Agents Without Realising It).

The difference is not better conversation. It is reduced coordination.

A support agent no longer has to switch between systems to gather information. The AI system performs those steps within a single workflow.

| Aspect | Traditional Chatbot | AI Agent-Based System |
|---|---|---|
| Task Type | Single-step response | Multi-step workflow |
| Data Access | Limited to conversation | Integrated across systems (CRM, APIs, databases) |
| Resolution | Often partial | End-to-end handling possible |
| Human Intervention | High | Reduced for routine cases |
| Operational Flow | Manual coordination | System-driven execution |

Industry adoption reflects this shift. Reports from McKinsey and Salesforce show that companies are increasingly deploying AI in customer service not just for answering questions, but for handling complete workflows.

Why AI Agents Fail (And What Causes Errors)

The same structure that makes AI agents powerful also introduces risk.

Because tasks are executed step by step, an early mistake can affect everything that follows. If the system misinterprets the goal or retrieves incorrect data, subsequent actions may still look reasonable while leading to the wrong outcome.

For example, a coding agent may modify the wrong file based on a flawed assumption. Even if tests pass locally, the system may introduce issues elsewhere.

AI agents also depend on tool reliability, data quality, and clearly defined permissions.

If any of these fail, the system may continue operating without recognising the issue.

This is why production systems include validation layers such as test execution, rule checks, or human approval. The system is designed to act, but also to verify.
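Permission, rule, and approval checks can wrap every action before it runs. This sketch assumes three hypothetical pieces: an allow-list of tools, a rule that refunds above a fixed limit need human approval, and an `approve` callback standing in for a human reviewer. None of these names come from a specific product, and test execution is not shown.

```python
from typing import Any, Callable, Dict, Set

# Hypothetical policy values; a real system would load these from configuration.
ALLOWED_TOOLS: Set[str] = {"lookup_order", "issue_refund"}
REFUND_LIMIT = 100.0


class ActionBlocked(Exception):
    """Raised when a proposed action fails a permission or rule check."""


def execute(
    tool: str,
    args: Dict[str, Any],
    tools: Dict[str, Callable[..., str]],
    approve: Callable[[str, Dict[str, Any]], bool],
) -> str:
    # Permission check: only tools explicitly granted to the agent may run.
    if tool not in ALLOWED_TOOLS:
        raise ActionBlocked(f"tool '{tool}' is not permitted")
    if tool == "issue_refund":
        amount = float(args["amount"])
        # Rule check: reject obviously invalid input before it reaches the payment system.
        if amount <= 0:
            raise ActionBlocked("refund amount must be positive")
        # Human approval: large refunds wait for a reviewer instead of running automatically.
        if amount > REFUND_LIMIT and not approve(tool, args):
            raise ActionBlocked("refund above limit was not approved")
    return tools[tool](**args)


def lookup_order(order_id: str) -> str:
    return f"order {order_id}: shipped"  # fake order system


def issue_refund(order_id: str, amount: float) -> str:
    return f"refunded {amount:.2f} on {order_id}"  # fake payment system


def deny_all(tool: str, args: Dict[str, Any]) -> bool:
    return False  # stand-in reviewer who rejects every request


tools = {"lookup_order": lookup_order, "issue_refund": issue_refund}
print(execute("issue_refund", {"order_id": "A100", "amount": 40.0}, tools, deny_all))
# refunded 40.00 on A100
try:
    execute("delete_account", {"customer": "c1"}, tools, deny_all)
except ActionBlocked as err:
    print("blocked:", err)  # blocked: tool 'delete_account' is not permitted
```

Each check sits outside the model. A misread goal or a bad lookup is therefore stopped at the action boundary instead of being trusted. A test run could be added as another check in the same place.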

When Chatbots Work Better Than AI Agents

Not every task requires AI agents.

For simple, single-step tasks, a chatbot is often more efficient.

Examples include summarising a document, explaining a concept, or rewriting text.

These tasks do not require memory, external tools, or iterative reasoning.

AI agents introduce additional structure. They become useful only when the task requires coordination across multiple steps or systems.

Below that threshold, a single response is enough.
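The threshold can be expressed as a rough routing rule. This is a hypothetical heuristic, not an established method. The `Task` fields are assumptions about what a system might know about a request before it chooses how to handle it.

```python
from dataclasses import dataclass


@dataclass
class Task:
    description: str
    needs_external_data: bool  # must it read or write another system?
    needs_memory: bool         # must it carry results from one step to the next?
    steps: int                 # rough number of dependent steps


def choose_handler(task: Task) -> str:
    # Any need for tools, memory, or multiple dependent steps pushes the task to an agent.
    if task.needs_external_data or task.needs_memory or task.steps > 1:
        return "agent"
    # Below that threshold, a single model response is enough.
    return "chatbot"


print(choose_handler(Task("Summarise this document", False, False, 1)))  # chatbot
print(choose_handler(Task("Where is my order?", True, True, 3)))         # agent
```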

Why AI Is Moving From Chatbots to Agent Systems

AI agents highlight a broader shift in system design.

The focus is no longer on making a single model more capable. It is on building systems that combine multiple capabilities into structured workflows.

Across the industry, AI systems increasingly include specialised components, orchestration layers, and validation mechanisms.

The model becomes one part of a larger system rather than the system itself.

This is why modern AI products are increasingly built around workflows rather than single features.

This is the same structural direction seen in multi-model architectures and orchestration-based systems, where different models work together instead of relying on a single model (see: Why One AI Model Is No Longer Enough — And What Replaces It).

My Take

Before AI agents, most AI systems depended on the user to manage the workflow.

If a task required multiple steps, the user had to prompt the system repeatedly, interpret each output, and decide what to do next. The model provided answers, but the process itself remained manual.

AI agents change that boundary.

They do not introduce a fundamentally new form of intelligence. Instead, they shift responsibility for planning, sequencing, and verification from the user to the system.

For an individual user, this reduces friction. Tasks that once required multiple prompts become continuous workflows.

For a small company, it reduces operational overhead by removing the need to manually coordinate across systems such as CRM, support tools, and internal data.

For large organisations, it introduces a new architectural layer where workflows are designed around controlled automation rather than human handoffs.

For governments and regulated environments, it shifts the problem from capability to control — how systems are constrained, monitored, and audited once they are allowed to act.

Across all of these cases, the pattern is the same.

The value of AI is no longer defined by how well it responds, but by how reliably it can complete a task.

That is the real shift: from systems that generate answers to systems that execute work.

Sources

AI agents are increasingly described as systems that combine models, tools, memory, and orchestration to perform multi-step tasks rather than single responses.

- AWS, What Are AI Agents: https://aws.amazon.com/what-is/ai-agents/

- McKinsey, What Is an AI Agent: https://www.mckinsey.com/featured-insights/mckinsey-explainers/what-is-an-ai-agent

- Google Cloud, What Are AI Agents: https://cloud.google.com/discover/what-are-ai-agents

- Microsoft, Understanding AI Agents vs Chatbots: https://www.microsoft.com/en-us/microsoft-copilot/for-individuals/do-more-with-ai/general-ai/understanding-ai-agents-vs-chatbots

- Salesforce, AI Agent vs Chatbot: https://www.salesforce.com/eu/agentforce/ai-agent-vs-chatbot/
