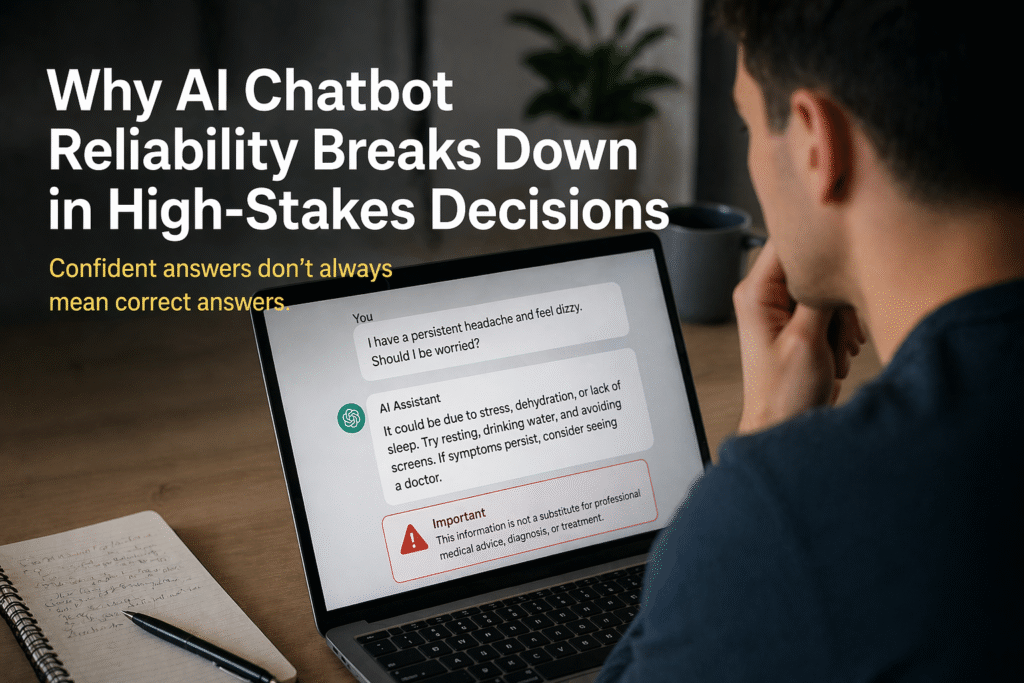

AI chatbot reliability breaks down in high-stakes decisions because answers are generated before they are verified. Image credit: KorishTech (AI-generated)

AI chatbot reliability drops sharply when these systems are used for medical advice and other high-stakes decisions. In controlled evaluations, chatbots were no better than traditional search in guiding users toward correct decisions, even though their responses appeared more structured and confident.

At first glance, this looks like an accuracy problem.

In reality, it is a structural problem.

Why This Feels Reliable — Until It Isn’t

Most users do not experience AI chatbots as unreliable.

They experience them as helpful, fast, and surprisingly clear. Answers are written in complete sentences, structured logically, and often include explanations that feel tailored to the question being asked.

This creates a strong signal of competence.

In human communication, clarity usually follows understanding. When someone explains something well, we assume they know what they are talking about. Over time, users begin to transfer that assumption to AI systems.

This is where the misalignment begins.

AI chatbots are not designed to signal uncertainty in a way humans naturally recognise. Instead, they produce responses that maximise coherence and fluency. The result is an answer that feels complete, even when it is not grounded in verified reasoning.

Reliability is not being measured. It is being inferred from how the answer sounds.

Chatbots Generate — They Do Not Validate

At a system level, AI chatbots are built to generate outputs, not to verify them.

When a user submits a question, the system processes the input and produces a response that best fits patterns learned during training. This process is highly effective at generating plausible and contextually appropriate language.

But there is a critical step missing.

After the response is generated, the system does not perform a second-stage check to determine whether the answer is correct, safe, or appropriate for the situation. There is no built-in mechanism that:

- compares the answer against authoritative sources

- checks alignment with formal guidelines

- evaluates whether the answer is complete or misleading

- assesses the potential consequences of being wrong

The output is produced and returned.

From the system’s perspective, the task is complete.

This is the core limitation behind AI chatbot reliability, and the reason it breaks down in high-stakes situations. The system is optimised for producing answers, not for confirming their validity before they are used.

Why This Limitation Stays Hidden in Everyday Use

In low-stakes scenarios, this design works well enough.

If a chatbot helps draft a message, summarise content, or suggest ideas, the user remains actively engaged in reviewing the output. Errors can be spotted, corrected, or ignored with minimal consequence.

In these cases, the system’s role is assistive. The user acts as the validation layer.

Because of this, the system appears reliable. It produces useful outputs quickly, and the cost of occasional inaccuracies is low.

The problem is that this experience does not generalise.

Users become accustomed to trusting the output, but the underlying system behaviour has not changed. It still generates answers without validating them. The only difference is that, in low-stakes contexts, the consequences of that design are not visible.

Why High-Stakes Decisions Expose the Gap

The same system behaves very differently when the cost of being wrong increases.

In healthcare, legal interpretation, or financial decision-making, answers are no longer suggestions. They influence real decisions. In these environments, an incorrect answer is not just inconvenient. It can lead to delayed treatment, legal exposure, or financial loss.

This is where the absence of validation becomes critical.

In some evaluations, nearly half of health-related responses — around 49.6% — were found to be incomplete, misleading, or not aligned with accepted medical guidance. These are not isolated failures. They are predictable outcomes of a system that does not check its outputs before returning them.

In addition, controlled studies have shown that even small changes in how a question is phrased can lead to different diagnoses or recommendations from the same chatbot.
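A rough way to probe this phrasing sensitivity is to ask the same question several ways and compare the answers. The sketch below is illustrative only: `consistency_check` and `fake_chatbot` are hypothetical names, the chatbot is a stub rather than a real model, and exact string matching is a crude stand-in for comparing the meaning of two answers.

```python
from collections import Counter
from typing import Callable, Iterable


def consistency_check(
    ask: Callable[[str], str],
    paraphrases: Iterable[str],
    min_agreement: float = 1.0,
) -> dict:
    """Ask the same question several ways and measure how often answers agree."""
    # Normalise lightly so trivial whitespace/case differences do not count.
    answers = [ask(p).strip().lower() for p in paraphrases]
    if not answers:
        raise ValueError("need at least one paraphrase")
    top_answer, top_count = Counter(answers).most_common(1)[0]
    agreement = top_count / len(answers)
    return {
        "answers": answers,
        "majority": top_answer,
        "agreement": agreement,
        "stable": agreement >= min_agreement,
    }


# Hypothetical stand-in for a chatbot whose recommendation flips on wording.
def fake_chatbot(prompt: str) -> str:
    return "see a doctor" if "worried" in prompt else "rest and hydrate"


result = consistency_check(
    fake_chatbot,
    [
        "I have a persistent headache and feel dizzy. Should I be worried?",
        "Persistent headache plus dizziness: what should I do?",
        "Headache and dizziness for days. Should I be worried?",
    ],
)
print(result["stable"], round(result["agreement"], 2))  # False 0.67
```

An unstable result does not say which answer is right. It only signals that the output depends on wording rather than on the underlying situation, which is a reason to withhold or escalate.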

Similar patterns appear outside healthcare. In legal contexts, studies have found that roughly 1 in 6 AI-generated responses included fabricated or non-existent citations, highlighting how confidently incorrect outputs can appear credible.
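Fabricated citations are one of the few failure modes that can be checked mechanically, because a reference either exists in a trusted index or it does not. The sketch below assumes a made-up citation format (`CASE-` plus four digits) and a tiny hypothetical index; a real system would query an authoritative case-law database instead.

```python
import re
from typing import Iterable, List

# Hypothetical identifiers standing in for a trusted case-law index.
TRUSTED_INDEX = frozenset({"CASE-0001", "CASE-0002", "CASE-0003"})

# Assumed citation format for this sketch only: "CASE-" followed by four digits.
CITATION_RE = re.compile(r"\bCASE-\d{4}\b")


def find_unverified_citations(
    answer: str, trusted: Iterable[str] = TRUSTED_INDEX
) -> List[str]:
    """Return cited identifiers that are absent from the trusted index."""
    trusted_set = set(trusted)
    unverified: List[str] = []
    for citation in CITATION_RE.findall(answer):
        # Keep first-seen order and skip repeats of the same citation.
        if citation not in trusted_set and citation not in unverified:
            unverified.append(citation)
    return unverified


draft = "The principle was set out in CASE-0002 and later confirmed in CASE-9041."
missing = find_unverified_citations(draft)
print(missing)  # ['CASE-9041']
```

A check like this confirms only that a reference exists, not that it supports the claim attached to it, so it narrows the problem rather than solving it.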

How Assumptions Break Across Contexts

| Context | What the User Assumes | What the System Actually Does |
|---|---|---|
| Low-stakes (email, summaries) | Output is a helpful suggestion | Generates text; user informally verifies |
| Health advice | Answer reflects medical reasoning | Produces plausible response without validation |
| Legal advice | Information is accurate and citable | May generate fabricated or incomplete references |
| Financial decisions | Recommendation is reliable | Outputs pattern-based suggestions without consequence awareness |

A Practical Example: Where Reliability Actually Breaks

Consider a user asking:

“I have a persistent headache and feel dizzy. Should I be worried?”

From the user’s perspective, this is a request for guidance. The expectation is not just information, but an assessment of whether the situation requires action.

The chatbot produces a response.

It may explain common causes, suggest rest or hydration, and provide general advice about when symptoms might require further attention. The answer is structured, calm, and coherent. It feels like a simplified version of medical reasoning.

But what is actually happening inside the system is very different.

- The response is generated from patterns in language, not from a clinical evaluation of symptoms

- The system does not check the answer against medical triage guidelines

- It does not evaluate whether the combination of symptoms indicates a serious condition

- It does not ask follow-up questions that a clinician would consider necessary

- It does not assess uncertainty in a way that meaningfully changes the response

Most importantly, it does not verify whether the advice it gives is safe to act on.

Even when answers appear reasonable, evaluations often rate them as only low to moderate quality compared to professional standards, reinforcing that fluency does not equal correctness.

The system produces an answer that resembles guidance, but it has not been validated as guidance.

If the user interprets that response as a reliable recommendation, the decision is now based on unverified output. The risk does not come from a single incorrect statement. It comes from the absence of any mechanism that determines whether the answer should be trusted in the first place.

This is where AI chatbot reliability breaks down.

This Cannot Be Solved by Better Models Alone

A common assumption is that improving AI models will resolve these issues.

Better models can produce more accurate responses on average. They can reduce obvious errors and generate more detailed explanations. But they do not change the fundamental process.

The system still generates an answer and stops.

This is also why systems increasingly rely on coordination layers to manage how and where decisions are made. As explored in "How AI Orchestration Expands to Control Compute Layers", execution is no longer just about generating answers but about ensuring they are handled within the right system structure.

Even a highly capable model can produce outputs that are:

- contextually inappropriate

- incomplete for the situation

- confidently misleading

because it is not required to verify them before returning them.

The limitation is not in how well the system generates language. It is in what happens after generation.

Without a validation layer, improvements in model quality increase fluency and plausibility, but do not guarantee reliability in high-stakes use.

What Changes When Validation Is Introduced

Reliability improves only when the system is extended beyond generation.

A validation layer changes the behaviour of the system in a fundamental way. Instead of treating the generated answer as final, the system introduces an additional step that evaluates whether the output meets certain criteria before it is delivered or acted upon.

This can include:

- checking responses against trusted data sources or guidelines

- enforcing constraints on what the system is allowed to answer

- identifying situations where uncertainty is too high to provide a direct response

- escalating decisions to human oversight when required
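The four steps above can be sketched as a small gate that sits between generation and delivery. This is a minimal illustration under stated assumptions, not a production design: the rule lists, the threshold, and every name (`Draft`, `Verdict`, `validate`, `answer_with_validation`) are hypothetical, and the `confidence` field assumes the generator can supply a usable uncertainty score, which real models may not do reliably.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Tuple


class Verdict(Enum):
    DELIVER = "deliver"
    WITHHOLD = "withhold"
    ESCALATE = "escalate"


@dataclass
class Draft:
    question: str
    answer: str
    confidence: float  # assumed to come from the generator, 0.0 to 1.0


# Hypothetical rule data; a real deployment would load vetted guidelines.
BLOCKED_TOPICS = ("dosage",)              # constraint on what may be answered
RED_FLAG_TERMS = ("dizzy", "chest pain")  # stand-in for triage guidelines
MIN_CONFIDENCE = 0.8                      # illustrative uncertainty threshold


def validate(draft: Draft) -> Verdict:
    """Decide what happens to a generated draft before anyone sees it."""
    text = draft.question.lower()
    if any(term in text for term in BLOCKED_TOPICS):
        return Verdict.WITHHOLD   # outside the allowed scope
    if any(term in text for term in RED_FLAG_TERMS):
        return Verdict.ESCALATE   # guideline match: needs human oversight
    if draft.confidence < MIN_CONFIDENCE:
        return Verdict.WITHHOLD   # too uncertain for a direct response
    return Verdict.DELIVER


def answer_with_validation(
    question: str, generate: Callable[[str], Draft]
) -> Tuple[Verdict, str]:
    """Generate a draft, then gate it instead of returning it directly."""
    draft = generate(question)
    verdict = validate(draft)
    if verdict is Verdict.DELIVER:
        return verdict, draft.answer
    if verdict is Verdict.ESCALATE:
        return verdict, "This needs review by a qualified professional."
    return verdict, "I can't give a reliable answer to this question."


# Stub generator standing in for a chatbot that always sounds confident.
def stub_generate(question: str) -> Draft:
    return Draft(question, "Try rest and hydration.", confidence=0.9)


verdict, reply = answer_with_validation(
    "I have a persistent headache and feel dizzy. Should I be worried?",
    stub_generate,
)
print(verdict.name, "-", reply)
```

With the headache example from earlier, the fluent draft is never shown: the red-flag match routes the question to a human. Keyword matching is far too blunt for real triage, but it shows where the friction between generation and action sits.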

With validation, the system no longer treats every generated answer as acceptable. It introduces friction between generation and action.

This is what makes reliability possible in high-stakes environments.

As systems are used in more critical contexts, AI chatbot reliability becomes less about how answers are generated and more about whether they are validated.

My Take

The discussion around AI chatbot reliability often focuses on whether the answers are correct.

That is only part of the problem.

The deeper issue is that most chatbot systems are designed to produce answers, but not to verify them before they are used. In low-stakes situations, this works because the user absorbs the risk. In high-stakes decisions, that risk becomes visible.

Reliability does not come from generating better answers alone. It comes from building systems that decide whether an answer should be trusted at all.

Until that layer exists, AI chatbot reliability will continue to break down exactly where it matters most.

Sources

- BBC News: Using AI for medical advice ‘dangerous’, Oxford study finds. https://www.bbc.com/news/articles/cpd8l088x2xo

- BBC News: AI chatbots give inaccurate medical advice (Oxford study). https://www.bbc.com/news/articles/c3093gjy2ero

- University of Oxford: New study warns of risks of AI chatbots giving medical advice. https://www.ox.ac.uk/news/2026-02-10-new-study-warns-risks-ai-chatbots-giving-medical-advice

- JAMA Network Open: Accuracy and Reliability of Chatbot Responses to Physician Questions. https://jamanetwork.com/journals/jamanetworkopen/fullarticle/2809975

- Journal of Medical Internet Research (JMIR): Reliability of Medical Information Provided by ChatGPT. https://www.jmir.org/2023/1/e47479/

- BMJ / medical audit coverage: Study finds popular AI chatbots often give problematic health advice. https://www.news-medical.net/news/20260416/Study-finds-popular-AI-chatbots-often-give-problematic-health-advice.aspx

- Society for Computers and Law: False citations – AI hallucination in legal contexts. https://www.scl.org/uk-litigant-found-to-have-cited-false-judgments-hallucinated-by-ai/
