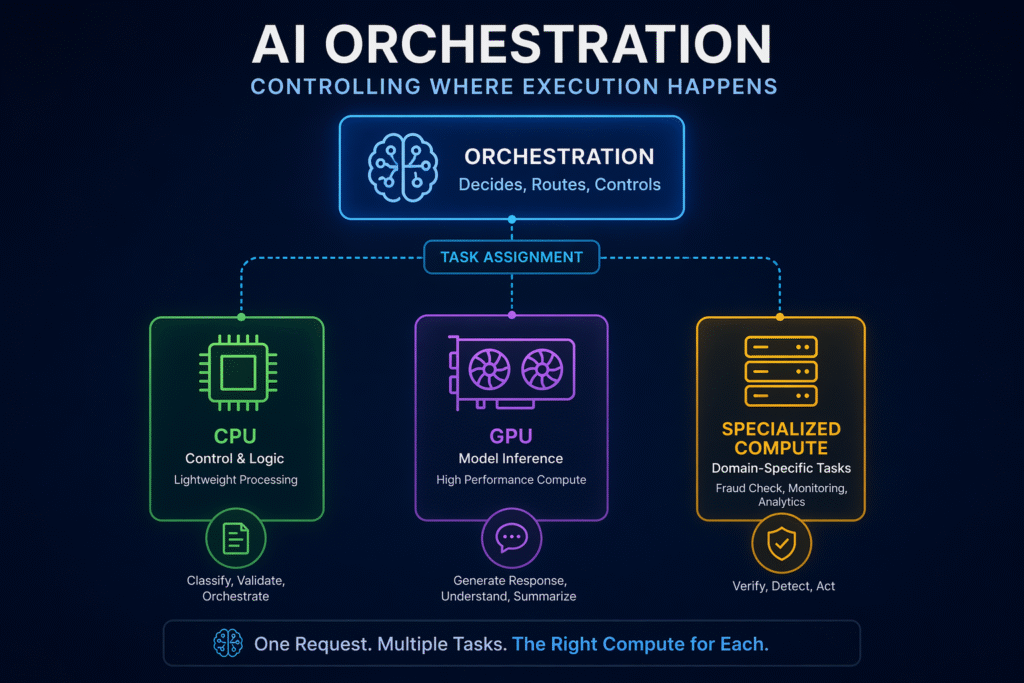

AI orchestration now decides where tasks run, controlling execution across multiple compute layers. Image credit: KorishTech (AI-generated)

AI orchestration is no longer just coordinating steps in a workflow. It is becoming the layer that decides where execution happens.

This shift shows how AI orchestration now controls execution across modern systems.

Modern AI systems do not run in a single environment. They operate across CPUs, GPUs, and specialised compute, each designed for different types of work. As this structure becomes more complex, simply sequencing tasks is no longer enough. The system must decide, before execution begins, where each task should run.

This is the shift. Orchestration is no longer about ordering tasks. It is about controlling execution.

How AI Orchestration No Longer Just Coordinates Steps

In earlier systems, orchestration was simple. A workflow was defined, and tasks were executed in sequence.

A request would move from one step to the next (data processing, model execution, output generation) without needing to consider where those steps ran. The system assumed a relatively uniform execution environment.

That assumption no longer holds.

As AI systems expanded to include multiple models, tools, and services, orchestration evolved to manage dependencies, retries, and task coordination. But even this version of orchestration remained focused on what happens next, not where it happens.

That boundary is now breaking.

The Decision Happens Before Execution

In modern systems, execution is not immediate. It is planned.

Before a task runs, the system evaluates what that task requires. It considers factors such as computational intensity, latency constraints, and resource availability. This evaluation happens before execution begins, not during it.

This introduces a new responsibility.

Orchestration must now determine not only the order of tasks, but also the environment in which each task should be executed. A task is no longer just “next in sequence.” It is assigned to a specific compute layer based on what it needs to function correctly.

This is what makes orchestration a control layer rather than a workflow layer.
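A minimal sketch of this pre-execution decision, under stated assumptions: the names `TaskProfile`, `Placement`, and `plan`, the two layer labels, and the rule that a queued GPU is acceptable when the wait fits the latency budget are all hypothetical illustrations, not taken from any particular orchestrator.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskProfile:
    name: str
    parallel_work: bool   # True if the task benefits from parallel computation
    max_latency_ms: int   # latency budget the caller can tolerate


@dataclass(frozen=True)
class Placement:
    task: str
    layer: str            # "cpu" or "gpu" (hypothetical layer names)
    reason: str


def plan(task: TaskProfile, gpu_free: bool, gpu_wait_ms: int) -> Placement:
    """Choose a compute layer for a task before it runs."""
    if not task.parallel_work:
        return Placement(task.name, "cpu", "sequential work")
    if gpu_free:
        return Placement(task.name, "gpu", "parallel work and a GPU is free")
    if gpu_wait_ms <= task.max_latency_ms:
        return Placement(task.name, "gpu", "queue wait fits the latency budget")
    return Placement(task.name, "cpu", "GPU wait exceeds the latency budget")


if __name__ == "__main__":
    reply = TaskProfile("generate_reply", parallel_work=True, max_latency_ms=2000)
    print(plan(reply, gpu_free=False, gpu_wait_ms=500))
```

The point of the sketch is that `plan` returns a placement and a reason without running anything. Execution only starts once that decision exists.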

A Practical Example: How Execution Is Actually Decided

Consider a customer support AI system handling a request:

“Where is my refund and why was my payment declined?”

In a simple system, this request might be passed directly to a model and returned as an answer.

In a modern system, orchestration breaks the task into parts and assigns them to different execution environments:

- The request is first classified using lightweight logic on CPU

- Relevant account and transaction data is retrieved from internal systems

- A model running on GPU generates an explanation based on that data

- A payment verification or fraud check may be triggered in a separate service

- The system then decides whether to return the response, retry, or escalate to a human

None of these steps are fixed to a single environment. Each is assigned based on what it requires.

This is the difference. The system is not just executing steps. It is deciding where those steps should run.

This is where AI orchestration becomes visible as a control layer rather than a simple workflow tool.
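The support request above could be planned roughly as follows. This is an illustrative sketch only: the step names, the environment labels, the keyword trigger for the payment check, and the confidence threshold in `decide_outcome` are assumptions made for the example, and running the final decision on CPU is likewise assumed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    name: str
    environment: str  # hypothetical label for where the step runs


def build_plan(request: str) -> list[Step]:
    """Break a support request into steps, each tagged with an environment."""
    text = request.lower()
    steps = [
        Step("classify_request", "cpu"),
        Step("fetch_account_data", "internal_api"),
        Step("generate_explanation", "gpu"),
    ]
    # Assumed trigger: only payment-related requests need the extra check.
    if "payment" in text or "declined" in text:
        steps.append(Step("verify_payment", "fraud_service"))
    steps.append(Step("decide_outcome", "cpu"))
    return steps


def decide_outcome(confidence: float, attempts: int) -> str:
    """Return the response, retry, or escalate to a human."""
    if confidence >= 0.8:
        return "respond"
    if attempts < 2:
        return "retry"
    return "escalate"


if __name__ == "__main__":
    for step in build_plan("Where is my refund and why was my payment declined?"):
        print(f"{step.name} -> {step.environment}")
```

The plan is data, not execution: every step carries its target environment before any of them runs.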

Orchestration Now Decides Where Tasks Run

Different parts of an AI system require different execution conditions.

Control logic and lightweight processing typically run in CPU environments. Model training and large-model inference usually depend on GPU acceleration. More specialised tasks may require dedicated systems designed for specific problem types.

These environments are not interchangeable.

Orchestration is now responsible for mapping tasks to the correct environment. If a task requires parallel computation, it must be routed to a GPU-capable system. If it requires sequential control or coordination, it must remain in a CPU-based environment.

This builds directly on the constraint described in “How AI Systems Decide Which Compute to Use.” The difference is that orchestration now makes that decision explicitly.

It is no longer assumed. It is controlled.
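One way to make this mapping explicit is capability matching: each environment declares what it can do, each task declares what it needs, and the router refuses to guess when nothing fits. The sketch below assumes a hypothetical `ENVIRONMENTS` registry and capability names chosen for illustration.

```python
# Hypothetical registry: environment name -> capabilities it offers.
ENVIRONMENTS = {
    "cpu_pool": {"sequential", "coordination"},
    "gpu_pool": {"parallel"},
    "specialised": {"domain_solver"},
}


def route(requirements: set[str]) -> str:
    """Return the first environment that satisfies every requirement."""
    for name, capabilities in ENVIRONMENTS.items():
        if requirements <= capabilities:  # subset test: all needs are met
            return name
    raise LookupError(f"no environment satisfies {sorted(requirements)}")


if __name__ == "__main__":
    print(route({"parallel"}))
    print(route({"sequential", "coordination"}))
```

Raising an error when no environment matches reflects the claim that environments are not interchangeable: a task that needs both parallel and sequential capabilities has to be split first, not silently placed somewhere unsuitable.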

This Requires a Global View of the System

To make these decisions, orchestration must understand the system as a whole.

It must be aware of:

- available compute resources

- current system load

- task requirements

- performance constraints

Execution happens locally. Orchestration operates globally. It sees the entire system and assigns work accordingly.

This global view allows orchestration to balance cost, performance, and reliability. Without it, tasks would be assigned blindly, leading to inefficiencies that accumulate across the system.
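To illustrate what a global view buys, the sketch below picks a node from a snapshot of the whole system rather than from a single local queue. The `Node` fields, the 0.8 load ceiling, and the "cheapest first, then least loaded" rule are assumptions for the example. A real scheduler would weigh far more signals.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Node:
    name: str
    kind: str             # "cpu" or "gpu"
    load: float           # 0.0 (idle) to 1.0 (saturated)
    cost_per_hour: float  # illustrative cost figure


def pick_node(nodes: list[Node], kind: str, max_load: float = 0.8) -> Optional[Node]:
    """Pick the cheapest node of the right kind that still has headroom."""
    candidates = [n for n in nodes if n.kind == kind and n.load < max_load]
    if not candidates:
        return None  # the caller decides whether to queue, fall back, or escalate
    return min(candidates, key=lambda n: (n.cost_per_hour, n.load))


if __name__ == "__main__":
    snapshot = [
        Node("gpu-a", "gpu", load=0.9, cost_per_hour=3.0),
        Node("gpu-b", "gpu", load=0.4, cost_per_hour=3.5),
        Node("cpu-a", "cpu", load=0.1, cost_per_hour=0.2),
    ]
    print(pick_node(snapshot, "gpu"))
```

In this snapshot the cheaper GPU node is skipped because it is already near saturation, a trade-off that is only visible when load and cost are considered together across all nodes.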

How Orchestration Has Changed

| Function | Earlier Orchestration | Expanded Orchestration |
|---|---|---|
| Main role | Sequence workflow steps | Decide both sequence and execution environment |
| Compute handling | Mostly fixed | Dynamically selected |
| System visibility | Local workflow view | Global system view |
| Resource use | Static or predefined | Adaptive to task and system state |
| Failure type | Workflow breaks | Workflow and compute mismatch both break system |
| Human role | Define steps | Define policy, boundaries, and escalation |

Real Systems Already Work This Way

This shift is already visible in large-scale AI infrastructure.

Cloud platforms from Amazon, Google, and Microsoft do not run AI workloads in a single environment. They operate across multiple compute layers and rely on orchestration to assign tasks appropriately.

In these systems, workflows are defined, but execution is not fixed. Tasks are dynamically placed onto available resources based on their requirements and system conditions.

This is why orchestration is closely tied to cloud infrastructure. It is not just coordinating software components. It is coordinating compute itself.

When Orchestration Gets This Wrong

When orchestration fails to assign tasks correctly, the system begins to degrade.

Tasks may be routed to environments that cannot support them efficiently. GPU resources may be used for tasks that do not require parallel computation, increasing cost without improving performance. CPU environments may become overloaded when high-throughput workloads are not offloaded correctly.

These failures do not remain isolated. As shown in “Why AI Systems Fail Without Proper Compute Routing,” incorrect execution decisions propagate across workflows, increasing latency and reducing system reliability.

The difference is that these failures now originate at the orchestration layer.
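The two mismatches described above can be detected with a simple check over recorded placements. The tuple layout and the issue messages in this sketch are hypothetical. It only shows the shape of such a check.

```python
def find_mismatches(placements: list[tuple[str, bool, str]]) -> list[tuple[str, str]]:
    """Flag placements where the layer does not match the task's needs.

    Each placement is (task_name, needs_parallel, layer).
    """
    issues = []
    for name, needs_parallel, layer in placements:
        if layer == "gpu" and not needs_parallel:
            issues.append((name, "gpu used for non-parallel work"))
        elif layer == "cpu" and needs_parallel:
            issues.append((name, "parallel work left on cpu"))
    return issues


if __name__ == "__main__":
    history = [
        ("classify_request", False, "gpu"),
        ("generate_explanation", True, "gpu"),
        ("batch_embeddings", True, "cpu"),
    ]
    for task, issue in find_mismatches(history):
        print(f"{task}: {issue}")
```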

Trust, Reliability, and Control

As orchestration takes on more responsibility, trust in the system depends on how its decisions are controlled.

AI orchestration becomes more reliable not when it makes more decisions, but when those decisions are constrained, observable, and reversible.

This typically requires:

- clear routing policies

- visibility into system behaviour

- validation before and after execution

- fallback and retry mechanisms

- auditability of decisions

Without these controls, orchestration can introduce silent failures that are difficult to detect until they affect the entire system.
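A small sketch of two of these controls, routing policy and auditability, assuming a hypothetical allow-list (`ALLOWED_LAYERS`) and an in-memory log. Every decision is written to the log before it is enforced, so rejected decisions stay visible instead of failing silently. Reversibility is not covered here.

```python
import json

# Hypothetical routing policy: task type -> layers it may run on.
ALLOWED_LAYERS = {
    "classify": {"cpu"},
    "generate": {"gpu", "cpu"},
}


def record_decision(log: list[str], task: str, layer: str) -> dict:
    """Log a routing decision, then reject it if the policy forbids it."""
    allowed = layer in ALLOWED_LAYERS.get(task, set())
    entry = {"task": task, "layer": layer, "allowed": allowed}
    log.append(json.dumps(entry, sort_keys=True))  # the audit trail comes first
    if not allowed:
        raise PermissionError(f"policy forbids running {task!r} on {layer!r}")
    return entry


if __name__ == "__main__":
    audit_log: list[str] = []
    record_decision(audit_log, "generate", "gpu")
    try:
        record_decision(audit_log, "classify", "gpu")
    except PermissionError as err:
        print(err)
    print(audit_log)
```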

The Human Role Does Not Disappear

Even as orchestration becomes more capable, human control does not disappear. It changes.

Humans are still required to:

- define policies and constraints

- set acceptable trade-offs between cost, latency, and accuracy

- design escalation paths

- intervene in ambiguous or high-risk situations

Orchestration can manage execution within those boundaries, but it does not replace the need for human-defined control.

What Happens When Systems Fail or Become Unavailable

In real systems, orchestration must also handle failure conditions.

If a compute environment becomes unavailable due to overload, outage, or infrastructure issues, the system cannot simply stop. It must fall back to predefined behaviour.

This may include:

- rerouting tasks to alternative environments

- degrading gracefully with reduced functionality

- retrying execution under different conditions

- escalating to human operators

These fallback behaviours are not optional. They are part of what makes orchestration reliable in practice.
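The fallback behaviours listed above can be sketched as an ordered chain: retry the preferred environment, reroute to the next one, and escalate to a human when every option is exhausted. The exception type, the runner functions, and the retry count are hypothetical names for illustration.

```python
from typing import Callable


class EnvironmentUnavailable(Exception):
    """Raised by a runner when its environment cannot take work."""


Runner = Callable[[str], str]


def run_with_fallback(
    task: str, runners: list[tuple[str, Runner]], retries: int = 1
) -> tuple[str, str]:
    """Try each environment in order; escalate when all of them fail."""
    for name, runner in runners:
        for _attempt in range(retries + 1):
            try:
                return name, runner(task)
            except EnvironmentUnavailable:
                continue  # retry this environment, then move on to the next
    return "human", f"escalated: {task}"


def gpu_runner(task: str) -> str:
    # Simulates an outage in the preferred environment.
    raise EnvironmentUnavailable("gpu pool is down")


def cpu_runner(task: str) -> str:
    # Simulates graceful degradation with reduced functionality.
    return f"reduced answer for {task}"


if __name__ == "__main__":
    print(run_with_fallback("refund_query", [("gpu", gpu_runner), ("cpu", cpu_runner)]))
```

Keeping the order of `runners` as explicit configuration means the fallback path is predefined rather than improvised at failure time.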

This Is Why Systems Become Multi-Compute

As orchestration expands, the structure of AI systems changes.

Systems are no longer designed around a single compute environment. They are built as multi-compute systems, where different layers handle different types of work.

Orchestration is what makes this structure possible.

It allows systems to integrate CPUs, GPUs, and specialised compute into a single workflow without hard-coding execution paths. Instead of fixed execution, systems become adaptive, assigning tasks dynamically based on context.

This is not an optimisation. It is a requirement for operating complex AI systems at scale.

As systems expand, AI orchestration becomes the central layer that determines how execution decisions are made.

My Take

The evolution of orchestration reveals a deeper shift in how AI systems operate.

The problem is no longer just coordinating tasks. It is deciding where those tasks should run.

This moves orchestration from a supporting role to a central control layer. It becomes the component that determines how the system behaves under real conditions.

As systems grow more complex, the number of possible execution paths increases. More compute types are introduced. More specialised components are added. And the system becomes increasingly dependent on making correct decisions before execution begins.

Orchestration is what makes those decisions possible at scale.

It is no longer just part of the system.

It is what allows the system to function as a system.

Sources

Multi-agent orchestration and AI system coordination (arXiv)

https://arxiv.org/html/2509.07571v1

https://arxiv.org/html/2601.13671v1

Cloud-based AI orchestration and pipelines

https://docs.aws.amazon.com/sagemaker/latest/dg/pipelines.html

Compute scheduling and GPU allocation (Kubernetes)

https://kubernetes.io/docs/tasks/manage-gpus/scheduling-gpus/

Distributed scheduling systems (Ray)

https://rise.cs.berkeley.edu/blog/ray-scheduling/

Cloud compute selection and infrastructure guidance

https://learn.microsoft.com/en-us/azure/cloud-adoption-framework/ai/infrastructure/compute

https://cloud.google.com/tpu

AI workload autoscaling and deployment

https://docs.cloud.google.com/vertex-ai/docs/predictions/autoscaling
