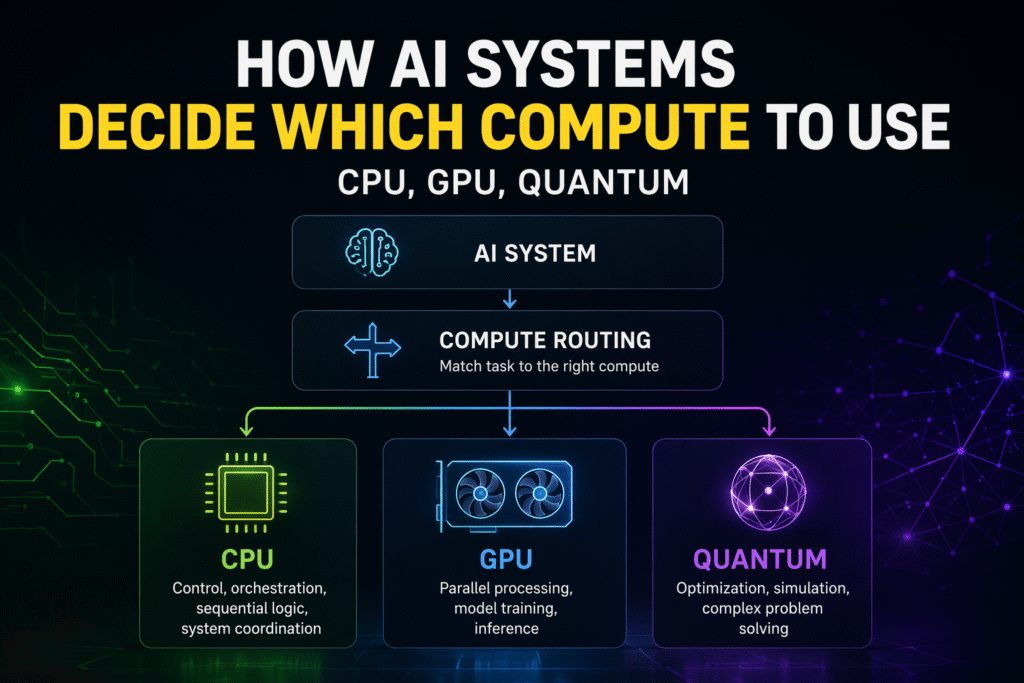

AI systems route tasks across CPU, GPU, and specialised compute layers based on task requirements. Image credit: KorishTech (AI-generated)

AI compute routing is the mechanism by which an AI system decides whether each part of a task runs on CPU, GPU, or specialised compute.

AI systems do not operate on a single type of compute. They distribute execution across different compute layers, because each part of the workflow requires fundamentally different conditions to run effectively.

This is not a performance optimisation. It is a structural requirement.

Modern AI systems depend on a mechanism that determines where each part of a task should execute before the system can proceed. That mechanism is compute routing, where tasks are directed to the compute layer that matches their structure, constraints, and execution requirements.

Without this layer of decision-making, the system does not simply become inefficient. It becomes unstable.

AI Systems Are Built on Unequal Compute Roles

AI infrastructure is often described in terms of scale — more compute, faster models, larger systems. In practice, what matters is not how much compute exists, but how it is divided.

Different types of compute exist because different types of work cannot be executed in the same way.

CPUs manage control flow, sequencing, and system-level coordination. GPUs execute large-scale parallel computation, particularly for model training and inference. Specialised systems, including emerging quantum compute, are designed to solve narrow classes of problems such as optimisation or simulation.

These roles are not interchangeable. They exist because the structure of the task determines how it must be executed.

As a result, the system is not defined by a single compute resource, but by how multiple compute layers are combined.

This layered structure reflects the broader system design explained in What Is AI Infrastructure and Why Does It Matter?.

This is the core logic behind AI compute routing in modern systems.

Execution Moves Across Compute Layers, Not Through One System

This becomes clearer when observing how real systems behave in production environments rather than simplified examples.

Consider a modern AI coding system built on top of large language models.

A single request — for example, fixing a bug — does not execute within a single compute environment. Instead, it moves across different layers.

The system first interprets the request and analyses the codebase using GPU-backed models. The result is then passed back into a control layer that determines what should happen next. If a modification is required, the system generates new code through GPU inference. That output is then sent to a testing environment, often running on separate CPU-based or containerised systems. If validation fails, the system loops back and repeats the process.

Each step runs in a different environment because each step requires different execution conditions.
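The bug-fix loop described above can be sketched as a trace of which layer each step lands on. This is a minimal illustration, not how any particular coding assistant is built: the layer labels, the step names, and the `run_fix_loop` function are all hypothetical, and the two callables stand in for GPU inference and a CPU-based test runner.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Hypothetical layer labels; a real system would map these to queues or clusters.
GPU, CPU = "gpu", "cpu"


@dataclass
class Step:
    name: str
    layer: str


def run_fix_loop(
    generate_patch: Callable[[int], str],  # stand-in for GPU-backed code generation
    run_tests: Callable[[str], bool],      # stand-in for a CPU/container test run
    max_attempts: int = 3,
) -> Tuple[bool, List[Step]]:
    """Run the generate/test loop and record the layer used by each step."""
    trace = [Step("analyse_codebase", GPU), Step("plan_next_action", CPU)]
    for attempt in range(1, max_attempts + 1):
        trace.append(Step(f"generate_patch_{attempt}", GPU))
        patch = generate_patch(attempt)
        trace.append(Step(f"run_tests_{attempt}", CPU))
        if run_tests(patch):
            return True, trace
        # Validation failed: the control layer decides to loop back.
        trace.append(Step(f"decide_retry_{attempt}", CPU))
    return False, trace
```

Calling `run_fix_loop` with a test function that only accepts the second patch produces a trace that alternates between the two layers, which is the movement the example is meant to show.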

This pattern is not limited to coding systems.

In recommendation systems used by companies like Netflix or Amazon:

- user behaviour data is processed continuously through CPU-driven pipelines

- models generate recommendations using GPU infrastructure

- ranking logic, filtering, and business rules are applied again through CPU-based systems before results are delivered

In scientific workflows, such as drug discovery systems used by IBM:

- classical systems generate candidate molecules using GPU-based models

- specialised compute may be invoked to evaluate complex molecular interactions

- results are returned to classical systems for further iteration

Across all of these examples, the pattern is consistent.

The system does not run in one place. It moves across compute layers, with each step routed to the environment that can execute it correctly.

Compute Routing Follows Task Structure, Not Preference

The system does not choose compute based on availability or power alone. It selects compute based on the structure of the task being performed.

Tasks that involve sequential logic, branching decisions, and coordination must run on CPUs, because they depend on ordered execution.

Tasks that involve large-scale pattern recognition or neural computation must run on GPUs, because they require parallel processing across large datasets.

Tasks that involve optimisation, simulation, or combinatorial search may be routed to specialised compute, but only when the problem structure matches what those systems are designed to solve.

This is the constraint that defines compute routing. It is not about speed. It is about compatibility between the shape of the problem and the structure of the compute.
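One way to picture this compatibility rule is as a small routing function that looks only at the shape of the task. The sketch below is an assumption-laden illustration: the `Task` fields, the `Layer` enum, and the order of the checks are invented for this example and do not describe any real scheduler.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    CPU = "cpu"
    GPU = "gpu"
    SPECIALISED = "specialised"


@dataclass(frozen=True)
class Task:
    name: str
    sequential: bool = False        # ordered, branching control logic
    parallel_numeric: bool = False  # large-scale neural or tensor computation
    combinatorial: bool = False     # optimisation, simulation, or search
    fits_solver: bool = False       # problem already matches the solver's form


def route(task: Task) -> Layer:
    """Pick a layer from task structure alone, ignoring availability and speed."""
    if task.sequential:
        return Layer.CPU
    if task.combinatorial and task.fits_solver:
        return Layer.SPECIALISED
    if task.parallel_numeric:
        return Layer.GPU
    # Anything without a recognised structure stays on the control layer.
    return Layer.CPU
```

Note that a combinatorial task is only sent to specialised compute when `fits_solver` is also true, mirroring the condition that the problem structure must match what the system is designed to solve.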

Which Compute Handles Which Task in AI Systems

| Task Type | CPU | GPU | Specialised Compute (e.g. Quantum) | Why |
|---|---|---|---|---|
| System control / orchestration | Yes | No | No | Requires sequential logic and coordination |
| Model training | Limited | Yes | No | Needs large-scale parallel computation |
| Inference (real-time) | Partial | Yes | No | Requires fast, scalable execution |
| Data processing / pipelines | Yes | Partial | No | Continuous, structured processing |
| Optimisation / simulation | Limited | Limited | Yes (conditional) | Matches specialised problem structures |
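The same table can be expressed as a lookup that a routing layer might consult. This is only a sketch: the dictionary keys, the `RANK` ordering (in particular, treating "partial" as better than "limited"), and the `candidates` function are assumptions made for illustration.

```python
# Suitability per task type, transcribed from the table above.
SUITABILITY = {
    "orchestration":      {"cpu": "yes",     "gpu": "no",      "specialised": "no"},
    "training":           {"cpu": "limited", "gpu": "yes",     "specialised": "no"},
    "realtime_inference": {"cpu": "partial", "gpu": "yes",     "specialised": "no"},
    "data_pipeline":      {"cpu": "yes",     "gpu": "partial", "specialised": "no"},
    "optimisation":       {"cpu": "limited", "gpu": "limited", "specialised": "conditional"},
}

# Assumed preference order; "conditional" only counts once its condition is met.
RANK = {"yes": 3, "conditional": 3, "partial": 2, "limited": 1}


def candidates(task_type: str, condition_met: bool = False) -> list:
    """Return usable layers for a task type, most suitable first."""
    row = SUITABILITY[task_type]
    usable = {
        layer: level
        for layer, level in row.items()
        if level != "no" and (level != "conditional" or condition_met)
    }
    # sorted() is stable, so ties keep the column order of the table.
    return sorted(usable, key=lambda layer: RANK[usable[layer]], reverse=True)
```

With this shape, `candidates("optimisation")` falls back to classical layers unless the caller confirms that the problem fits the specialised system.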

How AI Compute Routing Decides Before Execution

Execution in modern AI systems is not a single step. It is a sequence of decisions that determine where each part of a task should run before it is allowed to proceed.

This is becoming more visible as systems begin to incorporate specialised compute alongside traditional infrastructure.

Cloud platforms such as AWS and Microsoft Azure already operate heterogeneous environments where:

- CPU instances handle control logic and orchestration

- GPU clusters handle model training and inference

- specialised accelerators are available for specific workloads

These systems are designed around the assumption that different tasks require different execution environments.

This becomes even more explicit with emerging quantum integration.

Companies like IBM and Microsoft are not replacing classical infrastructure with quantum systems. Instead, they expose quantum hardware through cloud services, where it is invoked only for specific problem types such as optimisation or simulation.

In practice, this means a workflow runs primarily on classical infrastructure, while a specific subproblem is identified and routed externally to a specialised system. The result is then returned and reintegrated into the main workflow.
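The route-out-and-reintegrate pattern can be sketched in a few lines. Everything here is hypothetical: `external_solver` is a placeholder callable rather than a real quantum SDK call, and `max_external_size` is an invented stand-in for "the subproblem fits what the specialised system accepts".

```python
from typing import Callable, List, Optional


def classical_solve(costs: List[float]) -> int:
    """Fallback on classical compute: index of the lowest cost."""
    return min(range(len(costs)), key=costs.__getitem__)


def run_workflow(
    costs: List[float],
    external_solver: Optional[Callable[[List[float]], int]] = None,
    max_external_size: int = 4,  # assumed limit on what the solver can take
) -> dict:
    """Run a mostly classical workflow, routing one subproblem externally."""
    log = ["prepare:classical"]
    use_external = external_solver is not None and len(costs) <= max_external_size
    if use_external:
        best = external_solver(costs)   # subproblem leaves the main workflow
        log.append("solve:external")
    else:
        best = classical_solve(costs)   # stays on classical infrastructure
        log.append("solve:classical")
    log.append("reintegrate:classical")  # result returns to the main workflow
    return {"best_index": best, "best_cost": costs[best], "log": log}
```

The decision about whether to call the external solver is made before the subproblem executes, and the workflow keeps working when no solver is available or the problem does not fit.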

This is already visible in hybrid architectures, where quantum services act as external solvers rather than core execution environments.

The key point is not that quantum is widely used today, but that the system is already designed to decide when it should be used.

Compute routing is not theoretical. It is embedded in how modern AI infrastructure operates.

As Systems Expand, Routing Becomes a Core Constraint

Earlier AI systems could operate within simpler boundaries. A model would process an input, produce an output, and the system would stop.

Modern systems no longer behave this way.

They operate across multiple steps, multiple models, and multiple environments. They run continuously rather than episodically. They integrate tools, external data, and feedback loops.

As a result, the system is no longer executing a single task. It is managing a sequence of different task types, each with distinct compute requirements.

This is why compute routing is no longer an implementation detail. It becomes a structural constraint that determines whether the system can function at scale.

When Compute Routing Fails, System Reliability Breaks Down

Failures in AI systems are often attributed to model quality. In practice, many failures originate from incorrect execution decisions rather than model limitations.

These failures are visible in real systems.

In large-scale AI services, GPU infrastructure is sometimes overloaded with tasks that do not require parallel computation. When lightweight control or data-processing tasks are routed through GPU pipelines, the result is increased latency and cost without improving output quality.

In enterprise environments, attempts to simplify infrastructure by centralising workloads into a single compute layer often create instability. CPU-bound tasks are forced into GPU pipelines, while the GPUs themselves sit underutilised because of poor scheduling. The system appears operational, but performance degrades under mixed workloads.
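A simple audit can make this kind of misrouting visible. The sketch below assumes a hypothetical `Assignment` record in which each task is already labelled with whether it needs parallel computation; real systems would have to infer that from profiling or metadata.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Assignment:
    task: str
    needs_parallel: bool  # assumed label: does the task need parallel compute?
    layer: str            # "cpu" or "gpu"


def find_misrouted(assignments: List[Assignment]) -> List[str]:
    """Flag tasks whose layer does not match their structure."""
    problems = []
    for a in assignments:
        if a.layer == "gpu" and not a.needs_parallel:
            problems.append(f"{a.task}: lightweight work on GPU")
        elif a.layer == "cpu" and a.needs_parallel:
            problems.append(f"{a.task}: parallel work on CPU")
    return problems
```

An empty result means every task sits on a layer that matches its structure; anything else is a candidate for the latency and cost problems described above.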

A similar pattern appears in early quantum experimentation.

In optimisation systems explored by companies such as D-Wave Systems and IonQ:

- problems must be reformulated to fit specialised execution constraints

- execution depends on limited and complex hardware access

- results must be translated back into classical systems

When these systems are applied outside their suitable problem domain, the result is not improved performance, but increased complexity, longer execution times, and higher cost.

These failures follow a consistent pattern:

- tasks are routed to inappropriate compute layers

- execution becomes inefficient or unstable

- errors propagate across subsequent steps

The system continues to produce outputs, but reliability, cost efficiency, and scalability degrade over time.

This is not a failure of intelligence.

It is a failure of routing decisions.

Compute Routing Extends the Role of Orchestration

Compute routing does not operate in isolation. It is part of a broader control layer that determines how execution proceeds across the system.

As AI systems move from single-model to multi-model architectures, orchestration becomes responsible not only for selecting models, but also for determining how different compute layers are used.

This extends the role of orchestration from coordinating tasks to controlling execution environments.

This shift is explored in What Is AI Orchestration?, where systems manage how tasks move across models and tools, and in Why One AI Model Is No Longer Enough — And What Replaces It, where execution is already distributed across specialised components.

Once multiple compute types are introduced, orchestration must manage both the flow of tasks and the selection of compute.
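In code, that dual responsibility might look like an orchestrator that walks a plan and hands each step to the executor registered for its layer. This is a minimal sketch under stated assumptions: the `orchestrate` function, the `(step, layer)` plan format, and the executor registry are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

# An executor takes a step name and returns its result (illustrative signature).
Executor = Callable[[str], str]


def orchestrate(
    plan: List[Tuple[str, str]],      # ordered (step, layer) pairs
    executors: Dict[str, Executor],   # one executor per available compute layer
) -> List[str]:
    """Run steps in order, dispatching each to the executor for its layer."""
    results = []
    for step, layer in plan:
        if layer not in executors:
            # Fail loudly rather than silently running on the wrong layer.
            raise KeyError(f"no executor registered for layer {layer!r}")
        results.append(executors[layer](step))
    return results
```

The plan carries the flow of tasks and the registry carries the available compute, so a step that names a missing layer stops the run instead of degrading quietly.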

This becomes even more critical in multi-agent systems, as explained in Why Agent Orchestration Works.

At that point, AI compute routing becomes part of system control rather than a background optimisation choice.

My Take

The common assumption is that improving AI systems means improving models.

What this mechanism reveals is that system performance depends just as much on how tasks are executed as on what the models can do.

Compute routing exposes a deeper constraint. AI systems are not limited by capability alone, but by their ability to match each part of a task to the correct execution environment.

As systems become more complex — incorporating more models, more tools, and more specialised compute — the challenge shifts from building better components to coordinating those components effectively.

The question is no longer which model is best.

It is how the system decides what happens next, and where that decision is executed.

That is the layer that determines whether the system works at all.

Sources

IBM — Quantum Roadmap (toward fault-tolerant systems and hybrid usage)

https://www.ibm.com/roadmaps/quantum/

Microsoft — Azure Quantum (hybrid quantum-classical architecture model)

https://azure.microsoft.com/en-us/products/quantum/

AWS — Heterogeneous Compute & Quantum Services Overview

https://aws.amazon.com/braket/

NVIDIA — Accelerated Computing (CPU + GPU role separation in AI systems)

https://developer.nvidia.com/blog/

Google Cloud — AI Infrastructure & System Architecture

https://cloud.google.com/architecture/ai-ml

McKinsey — The State of AI (system-level deployment patterns and constraints)

https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai
