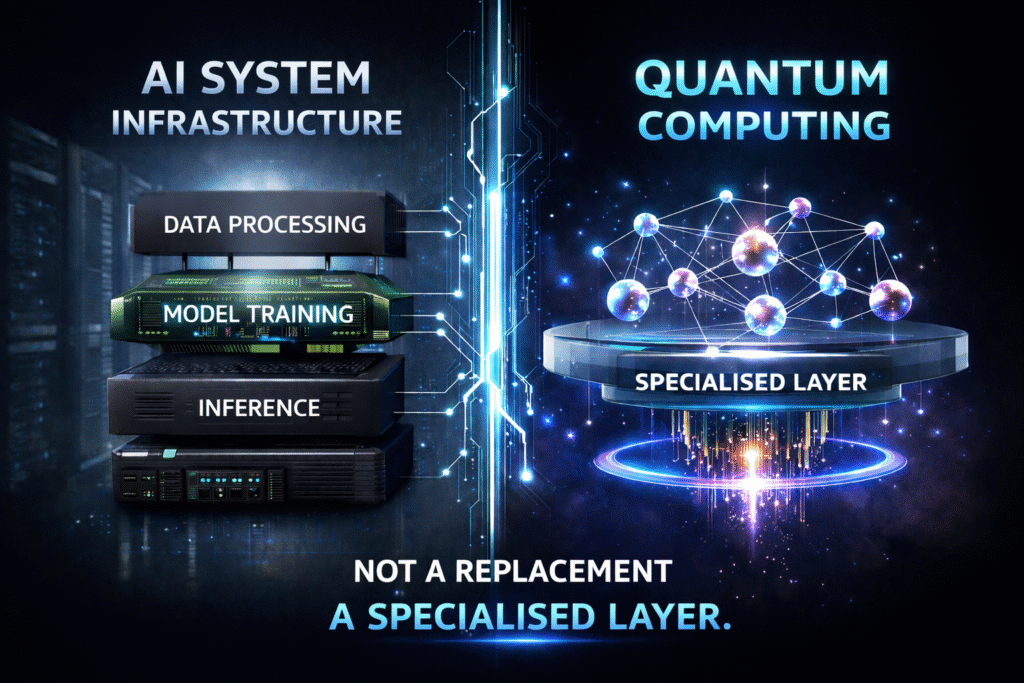

Quantum computing operates as a specialised layer within AI systems, not a replacement. Image credit: KorishTech (AI-generated).

AI systems are not being replaced by quantum computing. They are becoming more layered, because quantum computing operates as a specialised compute layer within AI infrastructure rather than as a replacement for it.

The discussion around quantum still focuses on capability — faster computation, new problem-solving approaches, and potential breakthroughs. But inside real AI systems, the constraint is not capability alone. It is where that capability can actually operate without breaking the rest of the workflow.

This is why quantum computing cannot operate on its own across the full AI workflow.

Once that constraint becomes visible, the architecture shifts in a predictable way: quantum is added to the system, but only in places where its strengths apply.

The System Does Not Start With Quantum

Consider how a real AI system operates in practice.

In a modern drug discovery pipeline, the process begins with large-scale biological data that must be collected, cleaned, and structured. Models are then trained on GPU infrastructure to learn patterns in molecular behaviour, after which AI systems generate candidate compounds that may have useful properties. This entire workflow depends on stable data processing, repeated training cycles, and continuous execution across distributed systems.

None of these stages naturally move to quantum computing. Not because quantum is incapable in theory, but because the system itself requires properties that quantum systems do not currently provide at scale, such as stability, repeatability, and high-throughput execution.

As a result, the system remains fundamentally classical at its core.

The Constraint Appears When the Problem Changes

The limitation becomes visible only when the nature of the problem shifts.

After candidate molecules are generated, the system reaches a stage where it must evaluate molecular interactions with a high degree of physical accuracy. At this point, classical approximation methods begin to struggle, particularly when the underlying problem becomes too complex to simulate efficiently.

This is where quantum computing becomes relevant. However, its relevance is conditional. It does not apply to the entire workflow, but only to this specific type of problem, where the structure of the task aligns with what quantum methods can handle.

This is the critical transition. Quantum does not enter because it is generally better. It enters because the problem has changed in a way that matches its strengths.

This Is Why Quantum Becomes a Solver, Not a System

Once introduced, quantum computing does not replace the surrounding infrastructure. Instead, it operates as a specialised component within it.

In the same drug discovery system, AI continues to generate candidate molecules using classical and GPU-based models. Quantum is then used, where appropriate, to evaluate specific molecular interactions that are difficult to approximate using classical methods. The results of that evaluation are passed back into the classical system, which continues the workflow.

This pattern reflects a broader architectural principle. Quantum is invoked only when a subproblem matches its strengths. It produces a result and exits the process, while the rest of the system continues to run on classical infrastructure.
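The invoke-and-return pattern can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a real chemistry workflow: `Candidate`, `needs_high_accuracy`, `classical_estimate` and `quantum_estimate` are hypothetical names, both estimators are stubs, and "lower energy is better" is assumed only for the ranking step. The point is the control flow: only flagged candidates go to the quantum solver, and every result flows back into the same classical loop.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Candidate:
    name: str
    # Hypothetical flag: True when classical approximation is judged too inaccurate
    needs_high_accuracy: bool
    energy: Optional[float] = None
    backend: Optional[str] = None


def classical_estimate(c: Candidate) -> float:
    # Stub standing in for a classical/GPU approximation method
    return float(len(c.name))


def quantum_estimate(c: Candidate) -> float:
    # Stub standing in for a quantum backend call; a real one would submit a job,
    # wait for hardware access, and decode the measured results
    return float(len(c.name)) - 0.5


def evaluate(
    candidates: List[Candidate],
    quantum: Callable[[Candidate], float] = quantum_estimate,
) -> List[Candidate]:
    for c in candidates:
        if c.needs_high_accuracy:
            # Quantum is invoked only for the subproblem that matches its strengths
            c.energy = quantum(c)
            c.backend = "quantum"
        else:
            c.energy = classical_estimate(c)
            c.backend = "classical"
    # Control returns to the classical workflow, which ranks the candidates
    # (lower energy assumed better, for illustration only)
    return sorted(candidates, key=lambda cand: cand.energy)


ranked = evaluate([
    Candidate("mol-A", needs_high_accuracy=False),
    Candidate("mol-BB", needs_high_accuracy=True),
    Candidate("mol-C", needs_high_accuracy=False),
])
for c in ranked:
    print(c.name, c.backend, c.energy)
```

Passing the quantum solver in as a parameter keeps it swappable: the surrounding pipeline does not change if the stub is replaced by a real backend or by a classical fallback.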

Why Quantum Computing AI Still Depends on Classical Compute

Outside of that narrow role, the rest of the system continues to depend on classical infrastructure.

Model training still requires large-scale matrix computation on GPUs. Inference systems must deliver reliable outputs in real time. Data pipelines must process and transform large volumes of information continuously. These are not isolated computations but ongoing processes that require stability and predictability.

This difference becomes clearer when broken down:

- AI training requires repeated, large-scale computation over datasets

- inference systems require fast, predictable responses

- production environments require stability and uptime

Quantum systems are not designed for these conditions. They remain sensitive to noise, constrained by hardware limitations, and dependent on controlled execution environments.

This constraint is also reflected in hardware progress. IBM’s roadmap still focuses on achieving fault-tolerant quantum systems, which shows that stable, large-scale execution is not yet available (IBM Quantum Roadmap). Until that threshold is reached, quantum cannot support continuous AI workloads in the way classical systems do.

This Forces the Architecture to Become Layered

Because quantum cannot take over the full workload, the system adapts by incorporating it as an additional layer.

Modern AI systems already rely on multiple forms of compute. CPUs handle control logic, GPUs handle large-scale parallel computation, and distributed systems manage data and scaling. Quantum computing is introduced into this environment as another specialised resource rather than a replacement.

This is how quantum computing becomes one layer in a layered AI compute architecture rather than a replacement for it.

This is already visible in real systems. Platforms such as AWS run hybrid workflows where classical infrastructure manages the overall process while quantum systems are called only for specific optimisation tasks. Similarly, Microsoft integrates quantum into its cloud stack as one compute option among many (Microsoft Azure Quantum), reinforcing that quantum operates as a layer within a broader system.

Once this happens, the problem changes. The system must now decide which compute layer should handle each part of the task. That decision introduces a new constraint: execution must be routed correctly, or the system becomes inefficient.

This reflects the same shift described in Why One AI Model Is No Longer Enough — And What Replaces It, where systems move from single approaches to specialised components working together.

This also introduces the coordination challenge explained in Why Agent Orchestration Works, where execution depends on routing tasks to the correct component at each step.
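The routing decision described above can be illustrated with a small dispatcher. This is a hypothetical sketch: the task kinds, the `ROUTES` table and the `route` function are invented for illustration, and real orchestration layers use far richer criteria than a lookup table. It shows two things the text implies: each task is sent to one compute layer, and the quantum layer needs a classical fallback when hardware access is unavailable.

```python
from enum import Enum
from typing import Dict


class Layer(Enum):
    CPU = "cpu"
    GPU = "gpu"
    QUANTUM = "quantum"


# Hypothetical routing table: task kind -> preferred compute layer
ROUTES: Dict[str, Layer] = {
    "control": Layer.CPU,
    "data_pipeline": Layer.CPU,
    "training": Layer.GPU,
    "inference": Layer.GPU,
    "molecular_simulation": Layer.QUANTUM,
}


def route(task_kind: str, quantum_available: bool) -> Layer:
    layer = ROUTES.get(task_kind)
    if layer is None:
        # Unknown work is rejected rather than guessed at
        raise ValueError(f"no route for task kind: {task_kind!r}")
    if layer is Layer.QUANTUM and not quantum_available:
        # Fall back to a classical approximation on GPUs when there is no quantum access
        return Layer.GPU
    return layer


workflow = ["data_pipeline", "training", "molecular_simulation", "inference"]
for kind in workflow:
    print(kind, "->", route(kind, quantum_available=True).value)
```

Only one of the four stages lands on the quantum layer, which mirrors the drug discovery example: the workflow stays classical and quantum is one routing target among several.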

What Fails When This Constraint Is Ignored

Problems arise when quantum computing is applied without respecting its role as a specialised layer.

In optimisation use cases across logistics and financial modelling, attempts to apply quantum methods too broadly have revealed a consistent pattern. Problems must be reformulated to fit quantum-compatible structures, execution depends on limited hardware access, and results require translation back into classical systems. Each of these steps introduces additional complexity.

In practice, this creates a predictable failure pattern:

- problems are forced into quantum-compatible formats

- execution depends on scarce and complex hardware

- outputs require translation back into classical systems

If the problem itself does not benefit from quantum methods, the system incurs this overhead without gaining performance improvements. The result is slower execution, increased cost, and reduced reliability compared to classical approaches.
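The overhead argument can be made concrete with a simple end-to-end time model. The sketch below is an assumption-laden illustration: `QuantumPath` and `worth_offloading` are hypothetical names, the numbers are invented rather than measured, and only time is modelled (not cost or reliability). It adds up the three overhead steps listed above plus device execution and compares the total with a classical baseline.

```python
from dataclasses import dataclass


@dataclass
class QuantumPath:
    # All durations in seconds; values used below are illustrative, not measurements
    reformulate_s: float  # mapping the problem into a quantum-compatible form
    queue_s: float        # waiting for access to scarce hardware
    execute_s: float      # running on the quantum device
    translate_s: float    # decoding outputs back into the classical system

    def total(self) -> float:
        return self.reformulate_s + self.queue_s + self.execute_s + self.translate_s


def worth_offloading(classical_s: float, q: QuantumPath) -> bool:
    # Offload only if the end-to-end quantum path beats the classical baseline
    return q.total() < classical_s


path = QuantumPath(reformulate_s=30.0, queue_s=300.0, execute_s=5.0, translate_s=20.0)
print(path.total(), worth_offloading(classical_s=120.0, q=path))
```

With these made-up figures the device itself runs for only 5 seconds, yet the full path takes 355 seconds and loses to a 120-second classical run. A fast solver does not help if the surrounding steps dominate.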

Replacement Fails, but Layering Works

Replacing an entire system requires a technology to perform all of its functions reliably.

Adding a layer requires only that it performs a specific function better than the existing approach.

This distinction explains why most infrastructure evolves through layering rather than replacement. GPUs did not replace CPUs, cloud computing did not eliminate on-premise systems, and AI did not remove traditional software architectures. Each introduced a new capability while leaving the underlying system intact.

Quantum computing AI follows the same pattern.

| System Function | Classical / GPU Systems | Quantum Systems | Why Replacement Fails |
|---|---|---|---|
| Data processing | Handles large-scale, continuous data pipelines | Not designed for data throughput | Cannot process bulk data efficiently |
| Model training | Runs iterative, high-volume computation | Not suited for repeated training loops | Lacks stability for continuous execution |
| Inference | Delivers real-time, scalable responses | Not viable for low-latency serving | Cannot support real-time systems |
| Optimisation / simulation | Limited by complexity at scale | Strong in specific problem structures | Only applicable to narrow tasks |
| System control | Managed through orchestration layers | Not applicable | Cannot coordinate execution |
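The difference between the replacement test and the layering test can be expressed as `all` versus "any specific function". This sketch uses a simplified, hypothetical boolean encoding of the table's "Quantum Systems" column. Marking only optimisation/simulation as `True` is a judgement drawn from the table, not a benchmark result, and `can_replace` and `layer_targets` are illustrative names.

```python
from typing import Dict, List

FUNCTIONS: List[str] = [
    "data_processing",
    "model_training",
    "inference",
    "optimisation_simulation",
    "system_control",
]

# Simplified, hypothetical encoding of the table's "Quantum Systems" column:
# True only where quantum is assumed to outperform the classical approach
QUANTUM_STRONG: Dict[str, bool] = {
    "data_processing": False,
    "model_training": False,
    "inference": False,
    "optimisation_simulation": True,
    "system_control": False,
}


def can_replace(strong: Dict[str, bool]) -> bool:
    # Replacement: the technology must cover every system function
    return all(strong[f] for f in FUNCTIONS)


def layer_targets(strong: Dict[str, bool]) -> List[str]:
    # Layering: it only needs to win on specific functions
    return [f for f in FUNCTIONS if strong[f]]


print(can_replace(QUANTUM_STRONG))    # False
print(layer_targets(QUANTUM_STRONG))  # ['optimisation_simulation']
```

One `False` is enough to rule out replacement, while a single `True` is enough to justify adding a layer. That asymmetry is why layering succeeds where replacement fails.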

What Does Not Change

Even as quantum computing improves, the core structure of AI systems remains unchanged.

In the drug discovery pipeline:

- data ingestion remains classical

- model training remains GPU-based

- deployment systems remain unchanged

- orchestration continues to control execution across components

Quantum affects only a specific part of the workflow, leaving the rest of the system intact. This reinforces the central constraint: the system is broader than the problems quantum is designed to solve. That is why quantum computing cannot replace the broader infrastructure, and why AI systems remain dependent on classical compute.

Why Quantum Computing AI Changes One Layer Only

The limitation is not how powerful quantum computing becomes, but how narrow its applicable domain remains.

Quantum can significantly improve certain types of problems, particularly where classical methods struggle with complexity or accuracy. However, AI systems are composed of many different types of tasks, most of which do not match the conditions under which quantum computing provides an advantage.

As a result, the architecture evolves by incorporating quantum into specific parts of the system, rather than replacing the system itself.

This follows the same structure explained in What Is AI Infrastructure and Why Does It Matter?, where AI systems operate as layered environments rather than single systems.

My Take

The expectation that quantum computing will replace AI infrastructure comes from viewing technological progress as a sequence of breakthroughs. In practice, system design evolves through integration, where new capabilities are added to existing structures rather than replacing them entirely.

Quantum computing makes this pattern visible. It introduces a powerful but narrow capability that must be positioned carefully within a broader system. This does not simplify AI architecture. It increases its complexity by adding another layer that must be managed and coordinated.

The real shift is not that quantum transforms AI systems on its own, but that AI systems are becoming more dependent on selecting the right type of compute for each task. As more specialised capabilities are added, the system becomes less about any single technology and more about how those technologies are combined.

This is why orchestration becomes necessary, as explained in What Is AI Orchestration?, where systems must control how different components are coordinated.

That is why quantum does not replace AI.

It changes one layer, and in doing so, makes system design more important than ever.