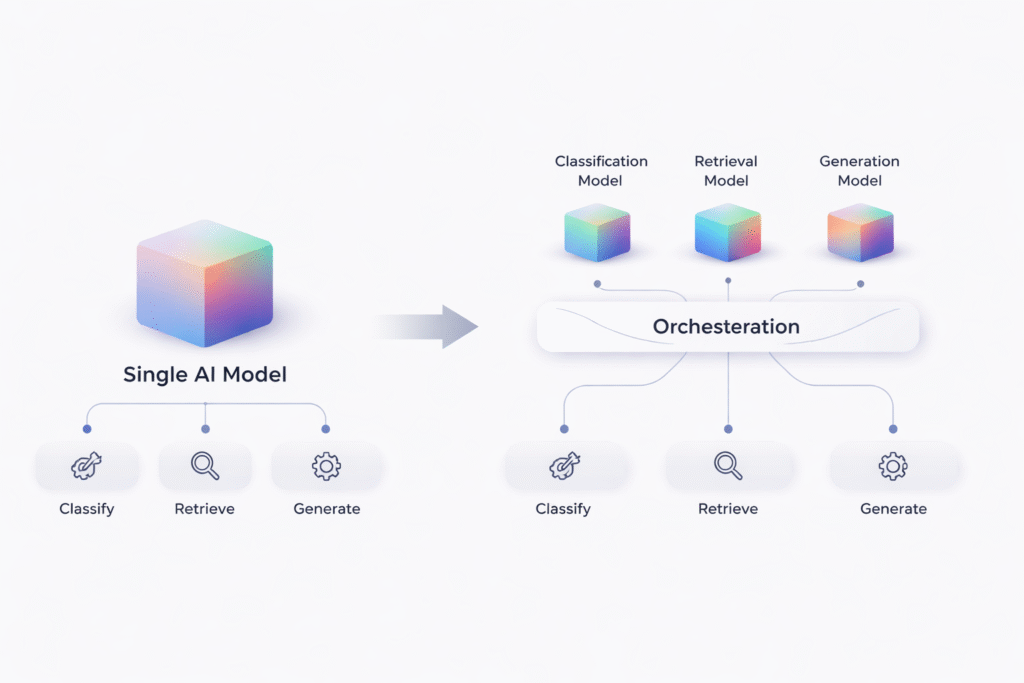

AI systems are shifting from a single general model to multiple specialised models coordinated within a structured system. Image credit: KorishTech (AI-generated).

One AI model was enough when AI systems were still treated like interfaces.

Today, AI multi-model systems are replacing that approach as production workflows demand consistency, cost control, and reliability.

A single model could answer questions, summarize documents, write code, and generate content through one API. That made early adoption fast. But production systems changed the requirement. A live support workflow, claims pipeline, or document processing system does not need a model that can do many things once. It needs a system that can do the same thing correctly, repeatedly, and at a cost that holds up under scale.

Gartner predicts that by 2027 organizations will use small, task-specific AI models at least three times more than general-purpose large language models. This is not because large models stopped improving. It is because a general-purpose model's accuracy tends to drop on narrow tasks that depend on specific domain context, and costs rise when every request is handled at the highest capability level.

That is where the single-model design breaks.

One Model Worked — Until It Didn’t

The single-model design worked because it removed system complexity.

Models like GPT-4 or Claude could act as a universal interface, handling classification, reasoning, and response generation in one place. In early-stage applications, that flexibility was enough. A single API call could answer a question, summarize content, and generate structured output without requiring a pipeline.

But the same flexibility becomes unstable when the system must behave predictably.

In customer support prototypes, a single model can respond to user queries with acceptable quality. Once deployed in production, however, the system must follow policy, reference correct internal data, and maintain consistent formatting across thousands of cases. In practice, the model may provide a correct answer in one instance, but vary structure, omit required details, or introduce unsupported assumptions in another.

The issue is not that the model fails completely.

It is that it performs multiple roles simultaneously without boundaries.

Why One Model Fails in Real Systems

The failure is structural, not technical.

Production workflows combine tasks that require fundamentally different behaviours. A classification step demands a short, deterministic output. A generation step requires flexible language. A compliance step must follow strict rules. When a single probabilistic model handles all of these roles, consistency breaks.

Even when outputs are technically correct, they are not consistent enough to reuse or validate automatically. Format changes, tone shifts, and structural drift make outputs unreliable in repeated workflows.

In regulated workflows such as insurance claims or financial reporting, even small variation in wording or structure can trigger rejection or manual review.

This creates downstream problems. Systems cannot depend on outputs behaving the same way across similar inputs. Automated validation becomes difficult. More importantly, failures cannot be isolated. If a single model misinterprets a request, that error propagates through the entire workflow.

What appears as a model issue is, in reality, a system design problem.

The System Is Actually Multiple Tasks

The shift begins when the system is observed more closely.

A production workflow is not a single task. It is a sequence.

A document processing system does not simply “understand” a document. It identifies the document type, extracts structured fields, validates those fields, and produces an output in a defined format. Each of these steps has different inputs, constraints, and accuracy requirements.

In customer support systems, the same structure appears. The system must first identify the issue, retrieve the correct policy or knowledge base, generate a response, and then ensure that the answer meets required standards before it is delivered.

Once this structure becomes visible, the system no longer looks like a single problem.

It becomes a chain of tasks.

This is task decomposition. It is not an optimisation. It is a correction of how the system actually operates.
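The document-processing sequence described above can be sketched as a chain of small functions. This is a minimal illustration under stated assumptions, not a reference implementation: the function names, the `Fields` record, the invoice format, and the keyword and regex rules are all hypothetical stand-ins for whatever models or rules a real system would use at each step.

```python
import re
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class Fields:
    doc_type: str
    invoice_number: Optional[str]
    total: Optional[float]


def classify(text: str) -> str:
    """Step 1: identify the document type (a keyword rule stands in for a classifier)."""
    return "invoice" if "invoice" in text.lower() else "unknown"


def extract(text: str, doc_type: str) -> Fields:
    """Step 2: pull structured fields out of the raw text."""
    number = re.search(r"Invoice\s+#(\w+)", text)
    total = re.search(r"Total:\s*\$?(\d+(?:\.\d+)?)", text)
    return Fields(
        doc_type=doc_type,
        invoice_number=number.group(1) if number else None,
        total=float(total.group(1)) if total else None,
    )


def validate(fields: Fields) -> list[str]:
    """Step 3: check the extracted fields against explicit rules."""
    errors = []
    if fields.doc_type == "unknown":
        errors.append("unrecognised document type")
    if fields.invoice_number is None:
        errors.append("missing invoice number")
    if fields.total is None or fields.total <= 0:
        errors.append("missing or non-positive total")
    return errors


def process(text: str) -> dict:
    """Step 4: run the chain and emit output in one fixed format."""
    doc_type = classify(text)
    fields = extract(text, doc_type)
    errors = validate(fields)
    return {
        "status": "ok" if not errors else "needs_review",
        "fields": asdict(fields),
        "errors": errors,
    }


print(process("Invoice #A123\nTotal: $250.00"))
```

Each function has its own input, output, and failure mode, so a problem in extraction shows up as a validation error rather than as a vaguely wrong final answer.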

Different Tasks Need Different Models

Once tasks are separated, the limitation of a single model becomes clearer.

General-purpose models are optimised for broad language behaviour. They perform well in flexible, open-ended tasks. Production workflows, however, require specific behaviours that are often incompatible within one model.

Classification needs speed and determinism. Extraction requires structured outputs. Validation demands strict rule-following. Generation allows flexibility. These are not variations of the same behaviour. They are fundamentally different requirements.

This is why many enterprise systems use lightweight classifiers or rule-based filters before invoking a larger model, rather than relying on one model to interpret everything.
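A rough sketch of that pattern: a cheap rule-based filter answers the easy cases and only unmatched requests reach the larger model. The `RULES` table, the category labels, and `fake_large_model` are invented for illustration. In a real system the fallback would be a call to whichever model the team actually uses.

```python
from typing import Callable

# Hypothetical keyword rules for a support inbox.
RULES = {
    "refund": ("refund", "money back", "chargeback"),
    "password_reset": ("password", "locked out", "reset"),
}


def classify_ticket(text: str, fallback: Callable[[str], str]) -> tuple[str, str]:
    """Return (label, source). Rules run first; the model is only a fallback."""
    lowered = text.lower()
    for label, keywords in RULES.items():
        if any(keyword in lowered for keyword in keywords):
            return label, "rules"
    return fallback(text), "model"


def fake_large_model(text: str) -> str:
    """Stand-in for an expensive model call; always answers 'other'."""
    return "other"


print(classify_ticket("I want my money back", fake_large_model))
print(classify_ticket("My parcel arrived damaged", fake_large_model))
```

The rule path is deterministic and costs almost nothing, which is the behaviour a classification step needs. The trade-off is that keyword rules miss phrasing they were not written for, which is why the fallback exists.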

The mismatch becomes clearer when each task is compared directly:

| Workflow step | Required behaviour | Failure pattern with single model |
|---|---|---|
| Classification | Fast, deterministic decision | Over-explains or misclassifies edge cases |
| Retrieval grounding | Accurate, up-to-date context | Hallucinates or uses outdated info |
| Response generation | Fluent, adaptive language | Acceptable but variable |
| Validation / compliance | Strict, rule-based | Ignores constraints or drifts in format |

This is not a weakness of intelligence.

It is a mismatch between model behaviour and workflow requirements.

AI Multi-Model Systems Replace the Single-Model Design

The solution is not improving prompts.

It is restructuring the system.

Instead of relying on one model, systems assign each task to a specialised component. A classification step is handled separately from retrieval. Retrieval is separated from generation. Generation is followed by validation.

Each component operates within a defined boundary.

This removes ambiguity and improves control. The workflow becomes composable, where each part can be adjusted without affecting the entire system.
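One way to picture composability is a pipeline where every step shares the same small interface, so a single step can be swapped without touching the others. The sketch below is illustrative only: the step functions, the `POLICIES` text, and the dictionary-based context are assumptions made for the example, and each step would normally wrap a model or service call.

```python
from typing import Callable, List

Step = Callable[[dict], dict]

# Hypothetical policy snippets standing in for a knowledge base.
POLICIES = {
    "refund": "Refunds are issued within 14 days.",
    "general": "Contact support for help.",
}


def classify(ctx: dict) -> dict:
    topic = "refund" if "refund" in ctx["query"].lower() else "general"
    return {**ctx, "topic": topic}


def retrieve(ctx: dict) -> dict:
    return {**ctx, "policy": POLICIES[ctx["topic"]]}


def generate(ctx: dict) -> dict:
    return {**ctx, "answer": f"Thanks for reaching out. {ctx['policy']}"}


def terse_generate(ctx: dict) -> dict:
    """Alternative generation step: returns the policy text only."""
    return {**ctx, "answer": ctx["policy"]}


def validate(ctx: dict) -> dict:
    # The answer must quote the retrieved policy verbatim.
    return {**ctx, "approved": ctx["policy"] in ctx["answer"]}


def run(steps: List[Step], query: str) -> dict:
    ctx = {"query": query}
    for step in steps:
        ctx = step(ctx)
    return ctx


default = run([classify, retrieve, generate, validate], "Can I get a refund?")
swapped = run([classify, retrieve, terse_generate, validate], "Can I get a refund?")
print(default["answer"])
print(swapped["answer"])
```

Swapping `generate` for `terse_generate` changes the wording of the answer while classification, retrieval, and validation stay exactly as they were.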

The model is no longer the system.

It is one part of it.

This is why AI multi-model systems are becoming the default structure in production environments.

Orchestration Becomes the Control Layer

Once multiple components exist, coordination becomes the central function.

Orchestration is the layer that controls how the system operates. It determines which task is executed, which model is used, how outputs move between steps, and how failures are handled.

In practice, this is what is often referred to as harness engineering.

In modern systems, orchestration is designed by humans and executed by software. Routing rules determine which model handles each request. Evaluation layers decide whether outputs are acceptable. Monitoring systems track performance and cost.

Platforms like Amazon Bedrock already implement this logic by routing simpler requests to cheaper models and reserving stronger models for complex cases. The result is not only cost reduction, but more stable system behaviour.
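A toy routing rule makes the idea concrete. This is not how Amazon Bedrock or any specific platform implements routing. The tier names, the relative costs, the hint words, and the length threshold are all assumptions chosen for illustration, and a production router would more likely use a trained classifier than a word list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    relative_cost: float  # hypothetical cost units per request


SMALL = ModelTier("small-model", 1.0)
LARGE = ModelTier("large-model", 10.0)

# Words that, in this toy heuristic, suggest the request needs reasoning.
COMPLEX_HINTS = ("why", "compare", "explain", "analyse", "analyze")


def complexity_score(prompt: str) -> int:
    """Crude heuristic: long prompts and reasoning words raise the score."""
    words = [word.strip(".,?!") for word in prompt.lower().split()]
    score = 0
    if len(words) > 30:
        score += 1
    if any(hint in words for hint in COMPLEX_HINTS):
        score += 1
    return score


def route(prompt: str) -> ModelTier:
    """Send anything that looks complex to the larger tier."""
    return LARGE if complexity_score(prompt) >= 1 else SMALL


print(route("What are your opening hours?").name)
print(route("Compare the two refund policies and explain the difference.").name)
```

The routing rule lives outside both models, so it can be tuned, logged, and audited without changing either one.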

The system is no longer reactive.

It is controlled.

Why This Reduces Cost and Improves Reliability

This architectural shift aligns resources with task complexity.

This shift closely connects to the broader move toward efficiency-focused AI systems, where cost and performance are optimised together rather than scaled blindly.

In a single-model system, every request is processed at the cost of the most powerful model. In a multi-model system, simple tasks are handled by cheaper models, while complex tasks use more capable ones.

AWS reports that routing requests to the most appropriate model can reduce costs by up to 30% without compromising performance.

| Scenario | Cost behaviour | Why |
|---|---|---|
| Single-model system | High for all requests | Same model used regardless of task complexity |
| Multi-model system | Lower overall | Simple tasks routed to cheaper models |
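The arithmetic behind that comparison is simple enough to sketch. The request counts and per-request costs below are made up for illustration and are not AWS pricing or measured results. The calculation also ignores the cost of the router itself and of any misrouted requests.

```python
def workload_cost(
    simple_requests: int,
    complex_requests: int,
    small_cost: int,
    large_cost: int,
) -> dict:
    """Compare sending everything to the large model against routing by complexity.

    Costs are in arbitrary integer units per request.
    """
    total = simple_requests + complex_requests
    single = total * large_cost
    multi = simple_requests * small_cost + complex_requests * large_cost
    saving_pct = round(100 * (single - multi) / single, 1)
    return {"single_model": single, "multi_model": multi, "saving_pct": saving_pct}


# Hypothetical workload: 600 simple and 400 complex requests.
print(workload_cost(simple_requests=600, complex_requests=400, small_cost=2, large_cost=10))
```

The saving depends entirely on two inputs: the share of requests that are genuinely simple and the price gap between tiers. If most traffic is complex, or the cheaper model costs nearly as much, the gap narrows quickly.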

Reliability improves for the same reason. Each task is isolated, making outputs easier to validate and errors easier to contain. Failures can be corrected at specific steps instead of affecting the entire workflow.
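As a sketch of what containing an error at one step can look like, the example below validates a generation step's output and retries only that step before escalating. The required keys, the retry limit, and `flaky_generator` are hypothetical. A real generator would be a model call rather than a function of the attempt number.

```python
import json
from typing import Callable, Optional

# Hypothetical schema for a claims decision.
REQUIRED_KEYS = {"claim_id", "decision"}


def parse_and_check(raw: str) -> Optional[dict]:
    """Accept the output only if it is a JSON object with the required keys."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict) or not REQUIRED_KEYS.issubset(data):
        return None
    return data


def run_step(generate: Callable[[int], str], max_attempts: int = 3) -> dict:
    """Retry this step alone; escalate to manual review if it keeps failing."""
    for attempt in range(1, max_attempts + 1):
        data = parse_and_check(generate(attempt))
        if data is not None:
            return {"status": "ok", "attempts": attempt, "output": data}
    return {"status": "needs_review", "attempts": max_attempts, "output": None}


def flaky_generator(attempt: int) -> str:
    """Stand-in for a model that drifts into free text on its first try."""
    if attempt == 1:
        return "Sure! The claim is approved."
    return json.dumps({"claim_id": "C-1", "decision": "approved"})


print(run_step(flaky_generator))
```

Because the check sits directly after the step that produced the output, malformed text never reaches the next stage, and the retry touches nothing else in the workflow.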

The system becomes predictable.

Where One Model Still Works

The single-model approach remains effective when tasks are unstructured and variability is acceptable.

Conversational systems, coding assistants, and exploratory tools benefit from flexibility. In these environments, the goal is not strict reproducibility, but usefulness across a wide range of inputs.

This is why general-purpose models continue to play a role.

The difference is that production workflows require control, while exploratory systems tolerate variation.

What This Reveals About AI Model Customization

This shift changes how AI systems are built.

This change reflects a deeper transition from standalone tools to structured AI infrastructure, where systems are designed to operate continuously rather than respond in isolation.

The focus moves from model capability to system control. Instead of selecting one model, companies design workflows that combine multiple components, each aligned to a specific task.

Gartner predicts the rise of task-specific models. AWS builds routing into its infrastructure. Microsoft formalises orchestration patterns. McKinsey highlights the importance of operational processes and human validation in successful AI deployment.

The model is no longer the center of the system.

The structure is.

This shift shows how AI multi-model systems are replacing single-model approaches at the system level.

My Take

The idea that one model could handle everything was not wrong. It was just early.

Before systems were structured, AI was used as a general interface. One model sat at the center, and everything—classification, reasoning, generation—was pushed through it. When it worked, it felt powerful. When it failed, the response was usually to improve prompts or switch to a better model. The assumption was that capability would solve the problem.

It didn’t.

As workflows became real—customer support, document processing, compliance systems—the limitation became clear. The issue was not whether the model could answer. It was whether the system could control how the answer was produced. That is what forced the shift.

What is often referred to as “harness engineering” is not a new model or a standalone discipline. It is a way of describing this transition from using a model directly to controlling how models are used within a system. In practice, it is closer to orchestration: defining tasks, assigning components, setting constraints, and managing flow between them. The term itself is still emerging and not consistently defined across the industry, but the underlying idea—control over execution rather than reliance on a single model—is already standard in production systems.

What changes after this shift is not just architecture, but behaviour.

Instead of one model trying to do everything, systems now combine multiple components. This can appear in different forms: multiple models inside one product, multiple agents coordinating tasks, or layered systems where retrieval, generation, and validation are separated. The exact implementation varies, but the structure is consistent. Tasks are decomposed, roles are defined, and execution is controlled.

This is also why the conversation around “AI tools” can be misleading. A single tool may appear unified on the surface, but internally it often operates as a system of components. It may use smaller models for routing, larger models for reasoning, retrieval layers for context, and validation layers for control. This article is not about tools versus models. It is about how systems are built underneath them.

The impact depends on who is using the system.

For an individual user, the change is mostly invisible. Tools feel more consistent, faster, and more reliable, even if the underlying system is more complex.

For small companies, the shift introduces a trade-off. Using a single model is simpler to build, but harder to control at scale. Moving to structured systems improves reliability, but requires more design effort and operational thinking.

For large companies, this is no longer optional. Cost, compliance, and consistency force systems to adopt task-specific models and orchestration layers. This is why infrastructure platforms now focus on routing, monitoring, and control rather than just model access.

For governments and regulated sectors, the implication is even stronger. Systems must be auditable, reproducible, and explainable. A single probabilistic model cannot meet those requirements alone. Structured, multi-component systems become necessary not for performance, but for accountability.

The shift is often described as moving from “better models” to “better systems,” but that understates what is happening.

The model is no longer the product.

The system is.

And once that changes, the question is no longer which model to use.

It is how the system is controlled.

Sources

MIT Technology Review — Shifting to AI Model Customization Is an Architectural Imperative

https://www.technologyreview.com/2026/03/31/1134762/shifting-to-ai-model-customization-is-an-architectural-imperative/

Gartner — By 2027 Organizations Will Use Small Task-Specific AI Models Three Times More Than General-Purpose LLMs

https://www.gartner.com/en/newsroom/press-releases/2025-04-09-gartner-predicts-by-2027-organizations-will-use-small-task-specific-ai-models-three-times-more-than-general-purpose-large-language-models

AWS — Intelligent Prompt Routing for Amazon Bedrock

https://aws.amazon.com/bedrock/intelligent-prompt-routing/

Microsoft — AI Agent Design Patterns and Orchestration

https://learn.microsoft.com/en-us/azure/architecture/ai-ml/guide/ai-agent-design-patterns

CIO — IT Leaders See Business Potential in Small AI Models

https://www.cio.com/article/3974073/it-leaders-see-big-business-potential-in-small-ai-models.html

McKinsey — The State of AI

https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai
